Walk into any electronics store or browse any smartphone listing online, and you will be bombarded with camera specifications. “200MP main sensor.” “UltraPixel technology.” “50MP periscope telephoto.” The numbers and brand names come fast, and they are often used in ways designed to impress rather than inform.

Two terms that cause particular confusion are Megapixel and UltraPixel. One is a universal unit of measurement. The other is a proprietary marketing term from HTC. They sound related, and in a sense they are — both describe how a camera sensor captures light — but they represent fundamentally different philosophies about what makes a great photograph.

This guide unpacks both concepts from the ground up, explains the physics of how camera sensors work, explores the real trade-offs between pixel count and pixel size, and helps you understand what actually matters when evaluating a smartphone camera.

Part One: Understanding Pixels and Megapixels

What Is a Pixel?

The word “pixel” is a portmanteau of “picture element.” It is the smallest discrete unit of a digital image — a single dot of color and brightness. Every digital photograph is a grid of pixels. Look at a digital image at 100% zoom and then zoom in further, and eventually you will see the individual colored squares: those are pixels.

On a camera sensor, each pixel corresponds to a photosite — a tiny light-sensitive element (typically a photodiode) that measures the intensity of light hitting it during an exposure. After a photo is taken, the brightness values from all the photosites are combined and processed into the final image file you see on screen.

What Is a Megapixel?

A megapixel is simply one million pixels. A 12-megapixel sensor captures roughly 12 million individual light measurements and arranges them into a grid to form an image. A 108-megapixel sensor captures 108 million.

The resolution of the resulting image is described by its pixel dimensions. A 12MP photo might be 4032 × 3024 pixels (multiply those together and you get approximately 12 million). A 200MP photo might be 16384 × 12288 pixels.
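The arithmetic above is easy to check. The short Python sketch below uses a hypothetical helper named `megapixels` (not part of any camera API) to multiply the pixel dimensions and divide by one million.

```python
def megapixels(width_px: int, height_px: int) -> float:
    """Return the pixel count of an image in megapixels (millions of pixels)."""
    return width_px * height_px / 1_000_000


print(megapixels(4032, 3024))    # 12.192768
print(megapixels(16384, 12288))  # 201.326592
```

Marketing figures round these values, which is why a 12.19-million-pixel image is sold as "12MP."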

Why Megapixels Matter — and Why They Don’t Tell the Whole Story

Higher megapixel counts offer genuine benefits in specific scenarios:

Large print capability. The more pixels in an image, the larger you can print it before it starts to look soft or pixelated. At 300 pixels per inch, a common benchmark for prints viewed up close, a 12MP photo (4032 × 3024) covers about 13 × 10 inches, and it still holds up at around 20 × 15 inches at 200 pixels per inch. A 50MP or 100MP photo supports much larger prints at the same density.

Cropping flexibility. With a high-resolution image, you can crop aggressively and still retain a detailed, usable photo. Cropping a 200MP image to 25% of its area still leaves you with a 50MP image — more resolution than many cameras produce at full frame.

Detail in complex scenes. In a landscape with intricate foliage, a cityscape with fine architectural detail, or a group photo with many faces, more pixels capture more of that fine information.
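The print and cropping figures can be estimated with a few lines of Python. This is a rough sketch: the function names are made up for illustration, and the 300 and 200 pixels-per-inch densities are assumed viewing standards rather than hard limits.

```python
import math


def max_print_size_in(width_px, height_px, ppi=300):
    """Largest print (width, height) in inches at a given pixels-per-inch density."""
    return width_px / ppi, height_px / ppi


def crop(megapixels, area_fraction):
    """Megapixels left after a crop, plus the equivalent linear zoom factor."""
    remaining = megapixels * area_fraction
    zoom = 1 / math.sqrt(area_fraction)
    return remaining, zoom


print(max_print_size_in(4032, 3024, ppi=300))  # (13.44, 10.08)
print(max_print_size_in(4032, 3024, ppi=200))  # (20.16, 15.12)
print(crop(200, 0.25))                         # (50.0, 2.0)
```

Keeping 25% of the area corresponds to only a 2× linear zoom, because resolution scales with area while zoom scales with width.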

However, megapixels are one of the most misleading numbers in camera marketing, because on their own they say very little about image quality. Two cameras can both shoot at 12MP, and one can produce photos that are dramatically sharper, cleaner, and more colorful than the other. The reason lies in what the megapixel count doesn’t tell you: the physical size of each pixel, the quality of the sensor, the lens, and the image processing pipeline.

Part Two: The Physics of Light Capture — Why Pixel Size Matters Enormously

How a Camera Sensor Captures Light

A digital camera sensor is a semiconductor chip covered in millions of photosites. Each photosite is covered by a color filter (in the standard Bayer array, a repeating 2×2 tile of one red, two green, and one blue filter) and a micro-lens that focuses incoming light onto the photodiode below.

When a photon strikes the photodiode, it liberates an electron via the photoelectric effect. More photons = more electrons = a brighter signal. After the exposure, each photosite reports the number of electrons it collected, and this analog signal is converted to a digital value by the analog-to-digital converter (ADC). The processor then demosaics the Bayer pattern (inferring the full color at each location from the surrounding filter array) and applies sharpening, noise reduction, and other processing to produce the final image.
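To make the Bayer pattern concrete, here is a minimal Python sketch that prints which filter color sits over each photosite. It assumes an RGGB tile, which is one common ordering; real sensors may start the tile on a different color, and the function name is hypothetical.

```python
def bayer_color(row: int, col: int) -> str:
    """Filter color over a photosite, assuming a repeating RGGB 2x2 tile."""
    tile = (("R", "G"),
            ("G", "B"))
    return tile[row % 2][col % 2]


for r in range(4):
    print(" ".join(bayer_color(r, c) for c in range(4)))
# R G R G
# G B G B
# R G R G
# G B G B
```

Each photosite records only one color, so demosaicing has to estimate the two missing colors at every location from its neighbors.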

The Critical Concept: Full-Well Capacity

Each photosite has a maximum number of electrons it can hold before it “overflows” — this is called its full-well capacity. A larger photosite has more physical space to collect electrons, meaning it has a higher full-well capacity. In practical terms:

- A larger photosite collects more light during an exposure.

- More light means a stronger, more reliable signal.

- A stronger signal relative to the background electronic noise means a higher signal-to-noise ratio (SNR).

- A higher SNR means cleaner, less grainy images — especially in low light.
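The chain of reasoning above can be sketched numerically. The Python model below is deliberately simplified: it assumes that collected charge scales with photosite area, that noise is only photon shot noise plus a fixed read noise, and that full-well capacity scales with area. Every number in it (50 electrons per square micron, 2 electrons of read noise, 1500 electrons of capacity per square micron) is an illustrative assumption, not measured sensor data.

```python
import math


def photosite_snr(pitch_um, electrons_per_um2,
                  read_noise_e=2.0, full_well_per_um2=1500.0):
    """Estimate SNR for a square photosite under a simple shot-noise model."""
    area_um2 = pitch_um ** 2
    # Collected charge grows with area but clips at the full-well capacity.
    signal = min(electrons_per_um2 * area_um2, full_well_per_um2 * area_um2)
    shot_noise = math.sqrt(signal)  # photon arrival follows Poisson statistics
    total_noise = math.sqrt(shot_noise ** 2 + read_noise_e ** 2)
    return signal / total_noise


dim_scene = 50.0  # assumed electrons collected per square micron
for pitch in (1.0, 2.0):
    print(f"{pitch} um pixel: SNR ~ {photosite_snr(pitch, dim_scene):.1f}")
# 1.0 um pixel: SNR ~ 6.8
# 2.0 um pixel: SNR ~ 14.0
```

Under these assumptions, doubling the pixel pitch quadruples the collected charge and roughly doubles the SNR, since shot noise grows only with the square root of the signal.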

This is why pixel size is one of the most important — and consistently under-reported — specifications in camera hardware.

The Megapixel-Pixel Size Trade-Off

Here is the fundamental tension at the heart of the megapixel debate: for a given sensor size, increasing the number of pixels necessarily decreases the size of each pixel.

If you have a sensor that is 1cm × 1cm and you divide it into a 10×10 grid, each pixel is 1mm². Divide the same sensor into a 100×100 grid, and each pixel is now only 0.01mm². More pixels, smaller pixels, less light per pixel.

This is why comparing cameras purely by megapixel count is misleading. A 12MP phone with a large sensor and large pixels can massively outperform a 108MP phone with a tiny sensor and tiny pixels in low-light shooting, dynamic range, and color accuracy — even though the 108MP phone sounds more impressive on a spec sheet.
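The trade-off can be expressed as a one-line formula: pixel pitch is approximately the square root of sensor area divided by pixel count. The Python sketch below assumes square pixels that tile the entire sensor with no gaps; the 9.0 mm × 6.75 mm sensor is a hypothetical size chosen for round numbers.

```python
import math


def pixel_pitch_um(sensor_w_mm, sensor_h_mm, pixel_count):
    """Approximate pixel pitch in microns for square pixels filling the sensor."""
    area_um2 = (sensor_w_mm * 1000) * (sensor_h_mm * 1000)
    return math.sqrt(area_um2 / pixel_count)


# Toy example from the text: a 10 mm x 10 mm sensor.
print(pixel_pitch_um(10, 10, 10 * 10))     # 1000.0 (1 mm per side)
print(pixel_pitch_um(10, 10, 100 * 100))   # 100.0  (0.1 mm per side)

# Hypothetical 9.0 mm x 6.75 mm phone sensor at two resolutions.
print(pixel_pitch_um(9.0, 6.75, 12_000_000))    # 2.25
print(pixel_pitch_um(9.0, 6.75, 108_000_000))   # 0.75
```

Going from 12MP to 108MP on the same sensor cuts the pitch to a third and the light-collecting area of each pixel to a ninth.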

Pixel Size: The Numbers

Pixel size is typically measured in microns (µm) — millionths of a meter. To give you a sense of scale:

A human hair is approximately 70 µm in diameter. The photosites on a smartphone sensor range from about 0.56 µm (very small, in extremely high-resolution sensors) to over 2.0 µm (large, in sensors designed for low-light performance). A full-frame DSLR or mirrorless camera sensor might have pixels in the 4–8 µm range, which is part of why professional cameras handle low light so well.

Part Three: UltraPixel — What It Is and Where It Came From

The Origin of UltraPixel

UltraPixel is a proprietary camera technology and marketing term introduced by HTC with the HTC One (M7) smartphone in 2013. It was a deliberate counter-programming move against the industry’s megapixel race, which at the time was pushing smartphone cameras to 13MP, 16MP, and beyond.

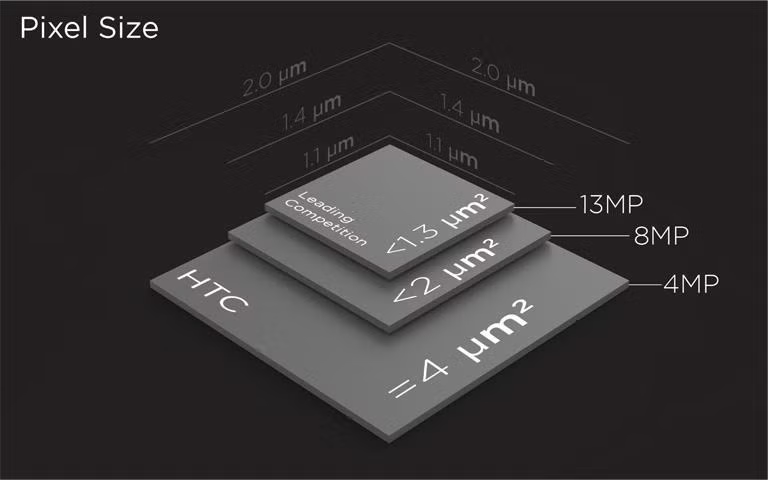

HTC’s core argument was simple and technically sound: instead of cramming more, smaller pixels onto a sensor, use fewer, larger pixels that each capture significantly more light. The HTC One M7’s camera was rated at just 4 “UltraPixels” (that is, 4 megapixels), but each pixel was an enormous 2.0 µm across, far larger than the ~1.1 µm pixels in competing 13MP cameras of the time.

The name “UltraPixel” was meant to communicate that each pixel was ultra-capable — ultra-sensitive to light — rather than that there were an ultra-large number of them.

The Technical Foundation of UltraPixel

UltraPixel technology was built around three core principles that HTC engineered into its sensor design:

Larger individual photosites (2.0 µm) gave each pixel roughly 3.3 times the surface area of a typical 1.1 µm smartphone pixel of the era, since area scales with the square of the pixel pitch: (2.0 / 1.1)² ≈ 3.3, or about 230% more area for collecting photons. This translated directly into better low-light sensitivity and less noise.

Backside-illuminated (BSI) sensor architecture moved the wiring circuitry to the back of the sensor rather than the front, allowing more of each photosite’s surface area to be exposed to incoming light rather than blocked by circuitry. BSI was still relatively new to smartphones at the time and contributed meaningfully to light capture efficiency.

An f/2.0 aperture lens — wider than most smartphone lenses of the era — allowed more total light into the camera, further boosting low-light capability.
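A back-of-the-envelope estimate shows how the pixel-size and aperture advantages multiply. In the Python sketch below, the 1.1 µm pitch comes from the comparison above, while the f/2.4 competitor lens is an assumed value for illustration only. The model ignores BSI gains, micro-lens efficiency, and lens transmission losses.

```python
def area_ratio(pitch_a_um, pitch_b_um):
    """How many times more area pixel A has than pixel B (square pixels)."""
    return (pitch_a_um / pitch_b_um) ** 2


def aperture_light_ratio(f_number_a, f_number_b):
    """How many times more light lens A passes than lens B per unit sensor area."""
    return (f_number_b / f_number_a) ** 2


pixel_gain = area_ratio(2.0, 1.1)            # UltraPixel vs. typical 13MP pixel
lens_gain = aperture_light_ratio(2.0, 2.4)   # f/2.4 is an assumed competitor lens
print(f"pixel area gain: {pixel_gain:.2f}x")              # pixel area gain: 3.31x
print(f"aperture gain:   {lens_gain:.2f}x")               # aperture gain:   1.44x
print(f"combined:        {pixel_gain * lens_gain:.2f}x")  # combined:        4.76x
```

Both factors scale with the square of a linear dimension, which is why modest-looking changes in pitch or f-number add up quickly.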

HTC positioned UltraPixel as a photography-first, quality-first approach for the real conditions in which people actually take photos: parties, restaurants, concerts, indoor events — all dimly lit environments where a standard 13MP sensor with tiny pixels would struggle.

How UltraPixel Performed in Practice

The HTC One M7’s camera received genuinely strong reviews for low-light photography. In dim conditions, it produced cleaner, less noisy images than many competitors shooting at higher megapixel counts. The physics were sound.

However, UltraPixel had a significant real-world weakness: in bright light or when photos were viewed at large sizes, the 4MP resolution was painfully limiting. Printing large photos, cropping images, or simply displaying them on the increasingly high-resolution screens that were becoming standard revealed a lack of detail that consumers found frustrating. The very low megapixel count also made the marketing comparison to 13MP competitors look unfavorable regardless of quality arguments.

HTC faced a peculiar problem: they were technically correct that pixel quality matters more than pixel quantity in many situations, but they had swung the pendulum too far. 4MP was simply too low for many users’ legitimate needs.

UltraPixel’s Evolution

In subsequent HTC devices, UltraPixel evolved:

The HTC One M8 (2014) retained the UltraPixel sensor but added a second “Duo Camera” depth sensor for computational photography effects — an early entry into what would become the multi-camera era.

The HTC One M9 (2015) made a surprising reversal, moving to a 20MP main sensor — seemingly abandoning the UltraPixel philosophy under competitive pressure, despite the fact that the resulting camera received worse reviews in low light than its predecessor.

Later HTC devices used UltraPixel branding for front-facing cameras, where the low resolution was less objectionable (selfie cameras rarely need to be printed large) and the low-light improvement in indoor selfies was genuinely valued.

HTC’s market share collapsed through the mid-to-late 2010s for reasons extending far beyond camera technology, and UltraPixel largely faded from the spotlight along with the brand.

Part Four: The Industry’s Answer — Pixel Binning and Large-Sensor High-Resolution Design

The Problem HTC Identified Was Real

While HTC’s specific UltraPixel implementation had limitations, the underlying problem they were solving — that tiny pixels capture less light and produce noisier images — was real and remained relevant as the megapixel race continued. Samsung, Sony, Apple, and others had to develop their own solutions.

Pixel Binning: Having It Both Ways

The most influential answer the industry developed is pixel binning (also called multi-pixel merging; vendors use names such as tetra-binning for 4-in-1 and nona-binning for 9-in-1). The idea is elegant: design a sensor with many small pixels, but give the camera the ability to combine, or “bin,” adjacent pixels together into a single larger super-pixel when the lighting demands it.

In practice, a 108MP sensor with pixel binning might shoot at full 108MP in bright daylight (maximizing resolution and detail) but automatically combine groups of 9 pixels (a 3×3 grid) into a single super-pixel in low light, effectively producing a 12MP image where each super-pixel has 9× the light-collecting area of a single native pixel. The combined pixel behaves much like a physically larger pixel, capturing more total light and producing a cleaner signal.
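The core of 3×3 binning is just block summation. The following pure-Python sketch treats the sensor as a monochrome grid of electron counts and sums each 3×3 block. It is a simplification: real sensors bin photosites that share a color filter and may combine charge on the sensor itself rather than in software. The function name is hypothetical.

```python
def bin_pixels(raw, factor=3):
    """Sum each factor x factor block of a 2D grid into one super-pixel."""
    rows, cols = len(raw), len(raw[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be multiples of the binning factor")
    return [
        [
            sum(raw[r + dr][c + dc]
                for dr in range(factor) for dc in range(factor))
            for c in range(0, cols, factor)
        ]
        for r in range(0, rows, factor)
    ]


raw = [[1] * 6 for _ in range(6)]  # 36 photosites, 1 electron each
print(bin_pixels(raw))             # [[9, 9], [9, 9]]
print(108 / 9)                     # 12.0 (megapixels after 9-in-1 binning)
```

The output grid has one ninth as many values, and each value carries nine times the signal, which is the resolution-for-light exchange described above.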

Samsung brought 9-in-1 binning to smartphones with its ISOCELL sensor line, introducing the 108MP ISOCELL HM1 (used in the Galaxy S20 Ultra). Its more recent 200MP sensors can also bin 16 pixels into one. Sony’s Quad Bayer array is a 4-in-1 implementation of the same concept, used in many Android flagships and in some iPhones.

The trade-off with pixel binning is that the full-resolution mode and the binned mode represent very different images. In extreme low light, the full-resolution 108MP mode can look worse than the 12MP binned mode. Some cameras handle this transition seamlessly; others produce inconsistent results.

Apple’s Approach: Larger Sensors with Larger Pixels

Apple has generally pursued a different philosophy: rather than dramatically increasing megapixel count, Apple focused on growing the physical size of its sensors and the size of each pixel, while investing heavily in computational photography and image signal processing.

The iPhone camera lineup has used relatively modest megapixel counts (12MP for most of its main sensor history until moving to 48MP with the iPhone 14 Pro) while featuring large pixels and sophisticated multi-frame processing. The iPhone 14 Pro introduced a 48MP main sensor that bins to a 12MP output by default, with full 48MP capture available in ProRAW mode.

Google’s Computational Photography Approach

Google’s Pixel phones took yet another philosophy: use a high-quality but modestly-specced sensor and compensate with extraordinary software and AI. Google’s Night Sight mode, HDR+ processing, and more recently Magic Eraser and Real Tone demonstrate that computational photography can overcome many hardware limitations, producing images from a 12MP sensor that rival or beat those from many competitors with stronger hardware specifications.

Part Five: Direct Comparison — UltraPixel Philosophy vs. Megapixel Philosophy

The Core Trade-Off Summarized

| Dimension | High Megapixel Approach | UltraPixel / Large Pixel Approach |
|---|---|---|
| Resolution | Very high; great for cropping and printing | Lower; limited for large prints or heavy cropping |
| Low-Light Performance | Weaker per pixel (smaller pixels = less light) | Stronger; larger pixels capture more photons |
| Noise | More noise in low light (smaller pixels) | Less noise; better signal-to-noise ratio |
| Dynamic Range | Can be lower (pixels saturate easily) | Higher; larger pixels have more full-well capacity |
| Daylight Performance | Excellent; fine detail resolved | Good, but less resolving power |
| File Size | Very large files | Smaller files |
| Computational Cost | High; processing 200MP images demands power | Lower |
| Zooming/Cropping Flexibility | High; lots of pixels to work with | Limited |
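The dynamic range row can be illustrated with a common engineering approximation: dynamic range in stops is the base-2 logarithm of full-well capacity divided by read noise. The Python sketch below uses assumed values (6,000 electrons for a small pixel, four times that for a pixel with four times the area, and 2 electrons of read noise for both). Real sensors will differ.

```python
import math


def dynamic_range_stops(full_well_e, read_noise_e):
    """Approximate dynamic range in stops as log2(full well / read noise)."""
    return math.log2(full_well_e / read_noise_e)


small = dynamic_range_stops(6_000, 2)    # assumed small-pixel full well
large = dynamic_range_stops(24_000, 2)   # assumed pixel with 4x the area
print(round(small, 1))           # 11.6
print(round(large, 1))           # 13.6
print(round(large - small, 1))   # 2.0
```

Under these assumptions, quadrupling the full-well capacity at constant read noise adds exactly two stops of dynamic range.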

When High Megapixels Win

High megapixel cameras have a genuine advantage when:

- You regularly shoot in bright, well-lit conditions where tiny pixels are not starved of light.

- You need to make large prints, whether poster-size or billboard-size.

- You frequently crop your images heavily to reframe or zoom in after the fact.

- You are shooting landscapes, architecture, or studio photography where fine detail is paramount.

- You want the flexibility to downscale a high-resolution image for different outputs while retaining options.

When Large Pixels (UltraPixel Philosophy) Win

The large-pixel, fewer-pixel approach wins when:

- You shoot primarily indoors, at night, or in unpredictable mixed lighting.

- You prioritize clean, low-noise images with natural color over fine textural detail.

- You are shooting fast-moving subjects where longer exposures (compensating for less light in tiny pixels) would cause blur.

- You are using a front-facing camera for video calls and selfies where print size is never a concern.

- You value consistent, reliable quality across a wide range of lighting conditions over peak performance only in ideal light.

Part Six: Modern Sensor Technologies Beyond the Megapixel/Pixel-Size Binary

Sensor Size: The Most Important Specification Nobody Talks About

The pixel-size-vs-count debate assumes a fixed sensor size, but the overall physical size of the sensor is arguably more important than either variable. A larger sensor can accommodate both high megapixel counts AND large pixels simultaneously.

This is why full-frame mirrorless cameras (sensor size: 36mm × 24mm) can have 60MP sensors with pixels of roughly 3.8 µm, while a smartphone with a sensor measuring roughly 9mm × 7mm must choose between resolution and pixel size.

Smartphone sensor sizes are typically described using an archaic “optical format” notation like “1/1.28-inch” or “1/2.55-inch” — these numbers are confusing and do not represent the actual physical dimensions. The key point is that bigger sensors (lower denominator in the fraction, or sensors described in larger absolute mm dimensions) are better, all else being equal.

Stacked CMOS and DRAM Integration

Modern flagship smartphone sensors use stacked CMOS architecture, where the photodiode layer, logic processing layer, and (in some designs) a DRAM memory layer are physically stacked on top of each other. This dramatically increases the speed of data readout from the sensor, enabling features like fast electronic shutters with far less rolling-shutter distortion, high-speed video at 4K/120fps or 8K/30fps, and extremely fast burst photography.
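To get a feel for the readout speeds involved, the Python sketch below computes output pixel throughput for the two video modes mentioned. It assumes the consumer UHD frame sizes (3840 × 2160 for 4K and 7680 × 4320 for 8K) and counts only output pixels, so the actual sensor readout rate may be higher.

```python
def pixel_rate(width_px, height_px, fps):
    """Output pixels per second for a given frame size and frame rate."""
    return width_px * height_px * fps


print(pixel_rate(3840, 2160, 120))  # 995328000
print(pixel_rate(7680, 4320, 30))   # 995328000
```

The two modes demand the same throughput, close to a billion pixels per second, which is the kind of rate stacked designs are built to sustain.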

Dual-Pixel and Multi-Pixel Autofocus

Many modern sensors build autofocus into the imaging pixels themselves. Dual-pixel autofocus (introduced by Canon in its DSLRs and later adopted in smartphones by Samsung) splits each photosite into two halves, each receiving light from slightly different angles, allowing the camera to use phase-detection autofocus across nearly the entire frame. This produces fast, accurate focusing even in low light and is one reason modern smartphone cameras focus so quickly.

Optical Image Stabilization (OIS)

OIS uses tiny gyroscopes and actuators to physically shift the sensor or lens element to counteract hand shake during exposure. This allows longer exposure times without motion blur — effectively extending the camera’s low-light capability by 2–4 stops. Combined with large pixels, OIS is one of the most impactful hardware features for low-light photography and video stabilization.
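Each stop of stabilization doubles the exposure time you can hold steady. The Python sketch below applies that rule to an assumed unstabilized handheld limit of 1/30 s; the baseline is an illustrative figure that varies with focal length and the person holding the phone.

```python
def stabilized_shutter_s(handheld_limit_s, stops):
    """Longest usable exposure when stabilization buys the given number of stops."""
    return handheld_limit_s * 2 ** stops


base = 1 / 30  # assumed handheld limit without stabilization, in seconds
for stops in (2, 3, 4):
    print(stops, round(stabilized_shutter_s(base, stops), 3))
# 2 0.133
# 3 0.267
# 4 0.533
```

A 4-stop gain lets the sensor collect 16 times as much light per frame, though it does nothing to freeze a moving subject.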

Part Seven: What Actually Makes a Great Smartphone Camera in 2024 and Beyond

Understanding the full picture, here is what to actually look for when evaluating a smartphone camera — beyond just the megapixel number:

Sensor size: larger is better. Look for sensors described as 1/1.28-inch or larger on flagships. A bigger sensor is the rising tide that lifts all boats.

Pixel size: look for 1.0 µm or larger on the main camera. Flagships with the largest sensors keep native pixels at roughly 1.2–1.6 µm even at 50MP, and binning then yields effective pixels of 2.0 µm or more.

Pixel binning implementation: does the camera intelligently switch between full-resolution and binned modes? How well does it handle the transition?

Aperture: wider apertures (lower f-numbers like f/1.5 or f/1.8) admit more light. Combined with large pixels, a wide aperture maximizes low-light capability.

OIS quality: optical stabilization on the main camera, whether lens-shift or sensor-shift, is standard on flagships and makes a significant difference for handheld low-light shooting and video.

Computational photography and AI processing: the software pipeline matters enormously. Google, Apple, and Samsung have invested heavily in image signal processors (ISPs) and AI models for scene recognition, HDR merging, noise reduction, and color science. A well-tuned ISP can make a modest sensor perform remarkably well.

The lens system: optical quality, focal length coverage (ultrawide, main, telephoto, periscope telephoto), and optical zoom range are as important as the sensor specifications.

Video capabilities: 4K/60fps, 8K, log profile support, stabilization quality, and audio capture are increasingly important as smartphones become primary video production tools.

Conclusion: Two Philosophies, One Goal

The megapixel vs. UltraPixel debate is ultimately a conversation about how to make the best photograph possible within the physical constraints of a smartphone-sized device.

The megapixel approach says: capture maximum detail, and let computational intelligence (pixel binning, AI noise reduction, multi-frame HDR) deal with the challenges of small pixels. The UltraPixel philosophy says: start with the best possible light capture at the hardware level, because no software can fully recover photons that were never collected.

Both are valid engineering philosophies. The smartphone industry has converged on a synthesis: use large sensors that allow high resolution with meaningfully sized pixels, augment with pixel binning for flexible lighting adaptation, and layer sophisticated computational photography on top of it all.

HTC’s UltraPixel was ahead of its time in identifying the right problem, and perhaps too aggressive in its solution. The legacy of that thinking lives on in every modern smartphone sensor that uses pixel binning, every camera spec sheet that now includes pixel size alongside megapixel count, and every camera review that acknowledges that more megapixels doesn’t always mean better photos.

The next time you see a 200MP camera headline, ask the more interesting question: how big are those pixels, how large is the sensor, and how does it perform when the lights go down? That is where the real story lives.