Cloud Computing Explained in Detail: The Technology Powering the Modern Digital World

When you stream a movie on Netflix, back up your phone photos to iCloud, send an email through Gmail, or ask your company’s CRM software to pull up a customer record, you are using cloud computing. It underpins virtually everything we do online today. Yet for all its ubiquity, “the cloud” remains a vague concept to most people — something that happens “out there,” somewhere on the internet.

This guide pulls back the curtain. We will explore what cloud computing actually is, how it physically and technically works, the different service and deployment models, the major providers and their offerings, security considerations, real-world use cases, and where the technology is headed next.

What Is Cloud Computing?

Cloud computing is the delivery of computing resources — servers, storage, databases, networking, software, analytics, artificial intelligence, and more — over the internet on a pay-as-you-go basis. Instead of buying and maintaining physical servers and data centers yourself, you rent access to those resources from a cloud provider who manages them for you.

The word “cloud” is simply a metaphor drawn from the way network diagrams have traditionally depicted the internet: as a fluffy cloud shape standing in for complex infrastructure you didn’t need to worry about. In practice, “the cloud” is a massive global network of physical data centers. These are buildings that each house thousands to tens of thousands of servers, along with storage arrays, networking equipment, cooling systems, and power infrastructure. Cloud providers own and operate them and connect them with high-speed fiber-optic links.

The U.S. National Institute of Standards and Technology (NIST) identifies five essential characteristics of cloud computing:

On-demand self-service — users can provision computing resources automatically, without requiring human interaction with each service provider.

Broad network access — capabilities are accessible over the network through standard mechanisms from a wide variety of devices.

Resource pooling — the provider’s computing resources are pooled to serve multiple consumers, with resources dynamically assigned and reassigned according to demand. Users generally have no control over or knowledge of the exact location of the provided resources.

Rapid elasticity — capabilities can be elastically provisioned and released to scale rapidly outward and inward with demand.

Measured service — resource usage is monitored, controlled, and reported, providing transparency for both the provider and consumer. You pay for what you use.

A Brief History

The conceptual roots of cloud computing stretch back to the 1960s, when computing pioneer John McCarthy suggested that “computation may someday be organized as a public utility.” In the 1990s, telecommunications companies began offering virtualized private network services, which let them use shared physical infrastructure to serve multiple customers — a precursor to modern cloud concepts.

The modern era began with Amazon Web Services (AWS). Amazon launched an early version of AWS in 2002 with a small set of developer tools and APIs. In 2006 it relaunched AWS as an infrastructure platform with S3 (cloud storage) and EC2 (virtual servers). The timing was fortuitous: the internet was maturing, broadband was spreading, and startups desperately needed a way to access scalable infrastructure without massive upfront capital investment. AWS gave them exactly that.

Google launched its App Engine platform in 2008. Microsoft followed with Azure in 2010. By the early 2010s, cloud computing had shifted from a niche concept to the dominant infrastructure paradigm for new software development. Today, global spending on public cloud services exceeds $600 billion annually and continues to grow at double-digit rates.

How Cloud Computing Works: The Physical and Technical Reality

Data Centers

The foundation of every cloud is physical. Data centers are enormous, highly engineered facilities that house the computing infrastructure. A hyperscale data center — the kind operated by AWS, Microsoft, or Google — can span hundreds of thousands of square feet and contain tens of thousands of servers. They require industrial-scale power supplies (often measured in megawatts), sophisticated cooling systems (because servers generate enormous heat), redundant network connections (multiple fiber paths to ensure connectivity even if one is cut), and tight physical security.

Cloud providers operate dozens or hundreds of such facilities distributed across the globe, organized into geographic regions — a cluster of data centers in a specific geographic area — and availability zones — isolated locations within a region that have independent power, cooling, and networking. This geographic distribution serves two purposes: it puts resources physically close to users to minimize latency, and it enables redundancy so that a power outage or natural disaster in one location doesn’t take down your service.
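Here is how a deployment tool might put that redundancy to use. This minimal sketch spreads replicas evenly across the availability zones of a region, so that losing one zone takes down only a fraction of capacity. The zone names are illustrative placeholders in AWS's naming style. The round-robin policy is an assumption, not any provider's actual placement algorithm.

```python
from collections import Counter

def spread_across_zones(replica_count: int, zones: list[str]) -> dict[str, int]:
    """Assign replicas to zones round-robin and return the count per zone."""
    if not zones:
        raise ValueError("at least one zone is required")
    placement = Counter(zones[i % len(zones)] for i in range(replica_count))
    # Include zones that received zero replicas so the result is complete.
    return {zone: placement.get(zone, 0) for zone in zones}

def surviving_fraction(placement: dict[str, int], failed_zone: str) -> float:
    """Fraction of replicas still running if one whole zone goes down."""
    total = sum(placement.values())
    return (total - placement.get(failed_zone, 0)) / total

zones = ["us-east-1a", "us-east-1b", "us-east-1c"]  # hypothetical zone list
placement = spread_across_zones(7, zones)
print(placement)  # {'us-east-1a': 3, 'us-east-1b': 2, 'us-east-1c': 2}
print(round(surviving_fraction(placement, "us-east-1a"), 3))  # 0.571
```

With seven replicas over three zones, an outage in the most heavily loaded zone still leaves about 57% of capacity serving traffic. With everything in one zone, the same outage would leave nothing.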

Virtualization: The Core Enabling Technology

What makes cloud computing economically viable is virtualization. A physical server is a fixed piece of hardware, but virtualization software — a hypervisor — allows that single physical machine to run multiple isolated virtual machines (VMs) simultaneously, each behaving as if it were a dedicated computer with its own operating system, CPU allocation, memory, and storage.

This is transformative for resource efficiency. Instead of each customer requiring their own dedicated server (which might sit idle at 5% utilization most of the time), a cloud provider can run dozens of customers’ virtual machines on the same physical hardware, dynamically shifting resources based on demand. The customers never know or need to care about the underlying hardware — they simply interact with their VM.
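A toy model makes the consolidation argument concrete. Treat each VM as a demand for vCPUs and pack the VMs onto as few physical hosts as possible. This sketch uses first-fit decreasing, a classic bin-packing heuristic. Real cloud schedulers also weigh memory, network, and placement constraints. The VM sizes and host capacity below are made-up numbers.

```python
def first_fit_decreasing(vm_vcpus: list[int], host_capacity: int) -> list[list[int]]:
    """Pack VM vCPU demands onto hosts; returns one list of VM sizes per host."""
    hosts: list[list[int]] = []
    free: list[int] = []  # remaining vCPUs on each host, parallel to `hosts`
    for demand in sorted(vm_vcpus, reverse=True):
        if demand > host_capacity:
            raise ValueError(f"VM needing {demand} vCPUs cannot fit on any host")
        for i, remaining in enumerate(free):
            if demand <= remaining:
                hosts[i].append(demand)
                free[i] -= demand
                break
        else:
            # No existing host has room, so bring a new one into service.
            hosts.append([demand])
            free.append(host_capacity - demand)
    return hosts

# Twelve hypothetical customer VMs; with dedicated hardware, each would need its own server.
vms = [2, 4, 8, 2, 16, 4, 2, 8, 4, 2, 2, 8]
hosts = first_fit_decreasing(vms, host_capacity=32)
print(len(hosts))  # 2
```

Twelve lightly loaded workloads end up sharing two 32-vCPU hosts instead of occupying twelve mostly idle machines. That efficiency gain is the one the paragraph above describes.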

The most widely used hypervisors include VMware’s ESXi, Microsoft’s Hyper-V, and the open-source KVM. AWS built its own virtualization platform, the Nitro System. Nitro pairs a lightweight hypervisor with dedicated hardware that offloads networking, storage, and security functions, which minimizes overhead and improves performance.

Containerization has emerged alongside virtualization as a complementary approach. Containers, popularized by Docker and orchestrated by Kubernetes, package an application and its dependencies into a lightweight, portable unit. Unlike VMs (which each run a full OS), multiple containers share the same operating system kernel, making them far more lightweight and faster to start. This makes containers ideal for microservices architectures where applications are decomposed into dozens of small, independently deployable services.

Networking in the Cloud

Cloud providers operate some of the largest private networks on earth. AWS, Microsoft, and Google each run a private global fiber backbone linking their regions. Within a cloud environment, customers create Virtual Private Clouds (VPCs; Azure calls them virtual networks, or VNets). These are isolated, logically defined networks inside the broader cloud infrastructure. Within a VPC, customers define several things:

- subnets
- routing rules
- firewalls (called security groups or network ACLs on AWS)
- connectivity to the internet or to their own on-premises networks, via encrypted VPN tunnels or dedicated private connections
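Subnet planning inside a VPC is ordinary CIDR arithmetic. The sketch below uses Python's standard `ipaddress` module to carve a hypothetical 10.0.0.0/16 VPC into /24 subnets and label a few of them as public or private. The address range and the public/private split are illustrative choices, not defaults of any provider.

```python
import ipaddress
from typing import Optional

vpc = ipaddress.ip_network("10.0.0.0/16")  # hypothetical VPC address range

# Split the /16 into 256 subnets of /24 (256 addresses each).
subnets = list(vpc.subnets(new_prefix=24))

# Illustrative layout: public subnets for load balancers, private ones for databases.
layout = {
    "public-a": subnets[0],
    "public-b": subnets[1],
    "private-a": subnets[10],
    "private-b": subnets[11],
}

def subnet_for(ip: str) -> Optional[str]:
    """Return the name of the subnet an address belongs to, or None."""
    addr = ipaddress.ip_address(ip)
    for name, net in layout.items():
        if addr in net:
            return name
    return None

for name, net in layout.items():
    print(f"{name:10} {net} ({net.num_addresses} addresses)")
print(subnet_for("10.0.10.25"))  # private-a
```

Providers typically reserve a few addresses in each subnet (AWS reserves five), so usable capacity is slightly below `num_addresses`.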

Load balancers distribute incoming traffic across multiple server instances to prevent any single machine from becoming a bottleneck. Content Delivery Networks (CDNs), such as Amazon CloudFront or Cloudflare, cache copies of static content at edge locations around the world. As a result, a user in Tokyo retrieving your website’s images doesn’t have to wait for a response from a data center in Virginia.
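The simplest load-balancing policy is round robin: each new request goes to the next server in rotation, skipping any instance that has failed its health check. Managed load balancers offer more sophisticated algorithms. This sketch, with hypothetical backend addresses, only shows the basic idea.

```python
import itertools

class RoundRobinBalancer:
    """Minimal round-robin balancer that skips unhealthy backends."""

    def __init__(self, backends: list[str]):
        self.backends = backends
        self.healthy = set(backends)
        self._cycle = itertools.cycle(backends)

    def mark_unhealthy(self, backend: str) -> None:
        self.healthy.discard(backend)

    def mark_healthy(self, backend: str) -> None:
        if backend in self.backends:
            self.healthy.add(backend)

    def next_backend(self) -> str:
        # Try each backend at most once per call so we never loop forever.
        for _ in range(len(self.backends)):
            candidate = next(self._cycle)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends available")

lb = RoundRobinBalancer(["10.0.1.10", "10.0.1.11", "10.0.1.12"])  # hypothetical instances
lb.mark_unhealthy("10.0.1.11")
print([lb.next_backend() for _ in range(4)])
# ['10.0.1.10', '10.0.1.12', '10.0.1.10', '10.0.1.12']
```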

Storage in the Cloud

Cloud storage comes in fundamentally different types, each suited to different needs. Object storage (like Amazon S3 or Google Cloud Storage) stores data as objects in a flat namespace. Objects are files of any type, up to terabytes in size, and each is identified by a key and accessed through a simple HTTP API. Object storage scales to virtually unlimited capacity, is highly durable, and is inexpensive per gigabyte. That makes it ideal for backups, media files, data lakes, and static website assets. Block storage (like Amazon EBS) works like a hard drive attached to a virtual machine. It is addressed in fixed-size blocks and suits databases and operating system volumes. File storage (like Amazon EFS or Azure Files) provides a shared file system that multiple machines can mount simultaneously, like a network drive.
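Object storage's flat namespace is easy to miss because web consoles display folders. In reality, a key such as `photos/2024/beach.jpg` is a single string. The "folders" are produced at listing time by grouping keys that share a prefix up to a delimiter, which is conceptually how S3's prefix-and-delimiter listing behaves. The in-memory sketch below mimics that behavior. The class and method names are hypothetical and are not the S3 API.

```python
class FlatObjectStore:
    """Toy in-memory object store: a flat mapping from string keys to bytes."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]

    def list_keys(self, prefix: str = "", delimiter: str = "/") -> tuple[list[str], list[str]]:
        """Return (keys, common_prefixes) one level below `prefix`."""
        keys: list[str] = []
        common_prefixes: set[str] = set()
        for key in sorted(self._objects):
            if not key.startswith(prefix):
                continue
            rest = key[len(prefix):]
            if delimiter and delimiter in rest:
                # Deeper keys collapse into a synthetic "folder" prefix.
                common_prefixes.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
            else:
                keys.append(key)
        return keys, sorted(common_prefixes)

store = FlatObjectStore()
store.put("photos/2024/beach.jpg", b"...")
store.put("photos/2024/city.jpg", b"...")
store.put("photos/readme.txt", b"...")
print(store.list_keys(prefix="photos/"))
# (['photos/readme.txt'], ['photos/2024/'])
```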

Data durability is achieved through redundancy: cloud providers typically replicate your data across multiple physical drives, servers, and availability zones automatically. Amazon S3, for example, is designed for 99.999999999% (eleven nines) annual durability. In AWS’s own illustration, if you store 10 million objects, you can on average expect to lose a single object once every 10,000 years.
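The arithmetic behind that illustration is straightforward if you read eleven nines as a per-object annual loss probability of 1 - 0.99999999999 = 10^-11. It also assumes that losses are independent, a simplification used here for illustration.

```python
from fractions import Fraction

# Eleven nines of annual durability means a per-object annual loss probability of 1e-11.
# Fractions keep the arithmetic exact instead of accumulating float rounding error.
annual_loss_probability = 1 - Fraction(99_999_999_999, 100_000_000_000)

objects_stored = 10_000_000

# Expected objects lost per year, assuming independent losses.
expected_losses_per_year = objects_stored * annual_loss_probability

# Average number of years between single-object losses.
years_per_loss = 1 / expected_losses_per_year

print(annual_loss_probability)   # 1/100000000000
print(expected_losses_per_year)  # 1/10000
print(years_per_loss)            # 10000
```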

Cloud Service Models: IaaS, PaaS, SaaS, and Beyond

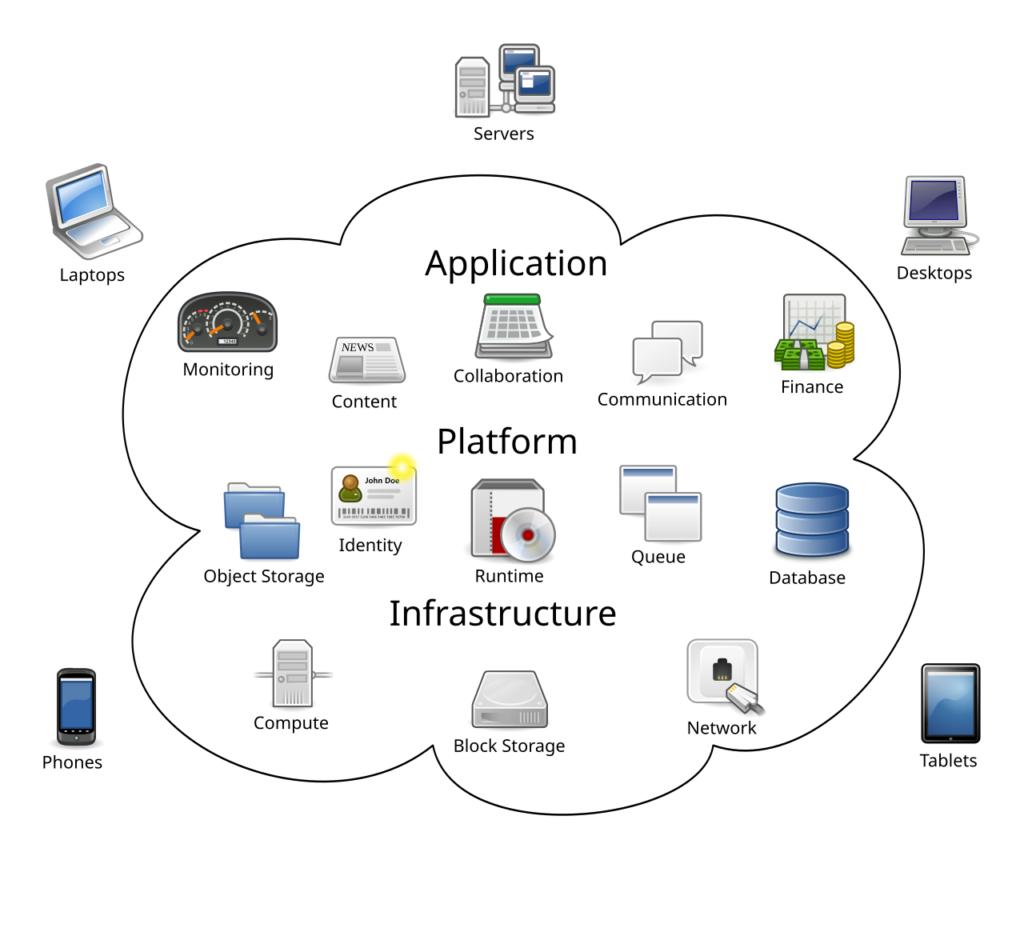

Cloud offerings are traditionally categorized into three service models, defined by how much of the underlying stack the provider manages versus how much the customer controls.

Infrastructure as a Service (IaaS)

IaaS gives you the raw building blocks of computing: virtual machines, storage, networking, and sometimes bare-metal servers. You are responsible for everything from the operating system up — patching the OS, installing software, configuring security, managing scaling. The cloud provider manages the physical hardware, the data center, and the hypervisor.

IaaS is the most flexible model and gives the most control. It is the right choice when you need to run custom software, have specific OS requirements, or are migrating existing (“lift-and-shift”) workloads to the cloud. AWS EC2, Google Compute Engine, and Azure Virtual Machines are the flagship IaaS products.

Platform as a Service (PaaS)

PaaS abstracts away the operating system and infrastructure management, giving developers a platform on which to build, run, and manage applications. You deploy your code; the platform handles the runtime environment, OS patching, scaling, load balancing, and availability. You focus on your application logic, not the plumbing beneath it.

Examples include AWS Elastic Beanstalk, Google App Engine, Heroku, and Azure App Service. PaaS is popular for web application development, APIs, and backend services where developer productivity is prioritized over infrastructure control.

Software as a Service (SaaS)

SaaS delivers fully operational software applications over the internet. The provider manages everything: infrastructure, platform, application code, databases, updates, and security. The customer simply uses the software through a browser or app. There is nothing to install, patch, or host.

The SaaS model has fundamentally transformed the enterprise software industry. Salesforce (CRM), Microsoft 365 (productivity), Slack (communication), Zoom (video conferencing), Dropbox (file storage), and Shopify (e-commerce) are all SaaS products. The global SaaS market exceeds $200 billion annually, and thousands of SaaS vendors compete across nearly every category of business software.

Emerging Service Models

Beyond the classic triad, several newer models have emerged:

Function as a Service (FaaS) / Serverless Computing allows developers to deploy individual functions of code that execute in response to events, without provisioning or managing any servers at all. AWS Lambda, Google Cloud Functions, and Azure Functions are the leading examples. You write a function, and the platform runs it when triggered. Billing is per invocation plus execution time, metered in milliseconds on AWS Lambda, so you pay nothing while the function sits idle. Serverless architectures are highly cost-effective for irregular or event-driven workloads.
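A serverless function is just a handler that the platform invokes with an event payload. The sketch below follows the Python handler convention AWS Lambda uses: a function that takes `event` and `context` arguments and returns a value. The event shape (modeled loosely on an API Gateway proxy request) and the greeting logic are hypothetical. Because the handler is plain Python, you can call it locally and test it before deploying it.

```python
import json

def lambda_handler(event, context):
    """Return a JSON greeting; `event` mimics an API Gateway proxy request."""
    # queryStringParameters is None when the request has no query string.
    params = event.get("queryStringParameters") or {}
    name = params.get("name", "world")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }

if __name__ == "__main__":
    # Local invocation: on the platform, the event and context are supplied for you.
    response = lambda_handler({"queryStringParameters": {"name": "cloud"}}, None)
    print(response["body"])  # {"message": "Hello, cloud!"}
```

Nothing in the function mentions servers, scaling, or ports. Those concerns belong to the platform, which is the point of the model.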

Database as a Service (DBaaS) provides fully managed database engines (relational, NoSQL, in-memory, time-series, and more). You don't manage the underlying servers or perform routine maintenance like backups, patching, or failover. Amazon RDS, Google Cloud Spanner, and Azure Cosmos DB are prominent examples.

AI and Machine Learning as a Service makes powerful AI capabilities accessible via API. Rather than training your own large language model or computer vision system, you call an API endpoint and pass it your data. Amazon SageMaker, Google Vertex AI, and Azure Machine Learning provide both the tools to train custom models and pre-trained models you can use directly.

Cloud Deployment Models

Beyond service models, cloud environments are also categorized by who owns and accesses them.

Public Cloud

Public cloud infrastructure is owned and operated by a third-party provider and shared among many customers (though each customer’s data and resources are logically isolated). It offers the greatest economies of scale, the broadest range of services, and the most flexibility. For most startups, small businesses, and digital-native organizations, public cloud is the default choice.

Private Cloud

A private cloud is cloud infrastructure operated exclusively for a single organization, either hosted on-premises in the organization’s own data centers or hosted by a third-party provider in a dedicated environment. It offers greater control, customization, and data sovereignty, but requires significant capital investment and operational expertise. Governments, financial institutions, and healthcare organizations with strict regulatory requirements often run private clouds.

Hybrid Cloud

Hybrid cloud combines public and private cloud environments, allowing data and applications to move between them. An organization might keep sensitive customer data and legacy applications on-premises in a private cloud while running public-facing web applications and analytics workloads on public cloud infrastructure. Hybrid cloud strategies are extremely common in large enterprises undergoing gradual digital transformation.

Multi-Cloud

Multi-cloud refers to the use of cloud services from more than one public cloud provider. An organization might use AWS for compute and storage, Google Cloud for machine learning and data analytics, and Azure for Microsoft 365 integration and Microsoft Entra ID (formerly Azure Active Directory). Multi-cloud strategies offer three benefits:

- They reduce vendor lock-in.
- They let organizations choose the best-in-class service for each workload.
- They limit exposure to an outage at any single provider.

The Major Cloud Providers

Amazon Web Services (AWS)

AWS is the clear market leader with roughly 30–33% of the global cloud infrastructure market. Launched in 2006, it has the broadest and deepest catalog of services — over 200 at last count — covering compute, storage, networking, databases, machine learning, analytics, IoT, security, and developer tools. AWS has the largest global infrastructure footprint with regions on every inhabited continent.

Microsoft Azure

Azure is the second-largest provider, with approximately 20–23% market share, and is growing rapidly. Its greatest strength is its tight integration with the Microsoft ecosystem — Windows Server, Active Directory, SQL Server, Teams, and Microsoft 365. This makes Azure the natural choice for enterprises already heavily invested in Microsoft software. Azure also has strong hybrid cloud capabilities through Azure Arc and Azure Stack.

Google Cloud Platform (GCP)

GCP holds roughly 10–12% of the market and stands out in three areas:

- its data analytics and machine learning capabilities, which reflect Google’s core strengths
- its globally distributed network infrastructure
- its Kubernetes expertise (Kubernetes originated at Google)

GCP is a popular choice for data-heavy workloads, for AI/ML development, and for organizations that want BigQuery, its widely used serverless data warehouse.

Other Notable Providers

Alibaba Cloud dominates the Chinese market and is growing across Asia-Pacific. IBM Cloud focuses on hybrid cloud and enterprise workloads, a strategy bolstered by its 2019 acquisition of Red Hat, maker of OpenShift. Oracle Cloud has a strong foothold in organizations running Oracle databases. Cloudflare, though not a full cloud provider, has become a major player in edge computing and network security services.

Cloud Security: Shared Responsibility and Best Practices

Security in the cloud operates under a shared responsibility model. The cloud provider is responsible for the security of the cloud — the physical infrastructure, hardware, networking, and the hypervisor layer. The customer is responsible for security in the cloud — their operating system configurations, application code, identity and access management, data encryption, and network controls.

This division is critical to understand. Moving to the cloud does not make you more or less secure by default — it shifts which security responsibilities you own. Many of the most publicized cloud security breaches (exposed S3 buckets containing sensitive data, for example) resulted from customer misconfiguration, not provider failures.

Key security practices in cloud environments include:

Identity and Access Management (IAM) is the foundation. Enforce the principle of least privilege — give every user and service account only the minimum permissions necessary to do their job. Use multi-factor authentication for all console and API access.
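The core evaluation rule behind most cloud IAM systems works like this. Everything is denied by default, an explicit allow grants access, and an explicit deny overrides any allow. The sketch below applies that rule to policies written in a simplified form of AWS's JSON policy structure (`Effect`, `Action`, `Resource`, with `*` wildcards). It deliberately ignores conditions, principals, case-insensitive action matching, and the many other policy types that a real IAM engine considers. The bucket name is hypothetical.

```python
from fnmatch import fnmatchcase

def _matches(patterns, value: str) -> bool:
    # A policy field may hold a single string or a list of strings.
    if isinstance(patterns, str):
        patterns = [patterns]
    return any(fnmatchcase(value, p) for p in patterns)

def is_allowed(policy: dict, action: str, resource: str) -> bool:
    """Default deny; an explicit Allow grants access; an explicit Deny always wins."""
    allowed = False
    for stmt in policy.get("Statement", []):
        if _matches(stmt["Action"], action) and _matches(stmt["Resource"], resource):
            if stmt["Effect"] == "Deny":
                return False
            if stmt["Effect"] == "Allow":
                allowed = True
    return allowed

# Least-privilege policy: read-only access to one hypothetical bucket of reports.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": ["s3:GetObject", "s3:ListBucket"],
         "Resource": ["arn:aws:s3:::example-reports", "arn:aws:s3:::example-reports/*"]},
        {"Effect": "Deny", "Action": "s3:*",
         "Resource": "arn:aws:s3:::example-reports/confidential/*"},
    ],
}

print(is_allowed(policy, "s3:GetObject", "arn:aws:s3:::example-reports/q1.csv"))     # True
print(is_allowed(policy, "s3:DeleteObject", "arn:aws:s3:::example-reports/q1.csv"))  # False
print(is_allowed(policy, "s3:GetObject",
                 "arn:aws:s3:::example-reports/confidential/x.csv"))                 # False
```

The policy grants only the two read actions the job needs, and a deny statement fences off one sensitive prefix even though the broader allow would otherwise cover it.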

Encryption should be applied both at rest (data stored in databases and object storage) and in transit (data moving over networks via TLS). Most cloud providers offer managed key management services to simplify encryption key lifecycle management.
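Encryption in transit is usually a matter of configuration rather than custom cryptography. For example, Python's standard `ssl` module can build a client context that does three things:

- verifies server certificates
- checks hostnames
- refuses protocol versions older than TLS 1.2

The TLS 1.2 floor here is a common baseline, not a requirement of any particular provider.

```python
import ssl

def make_client_tls_context() -> ssl.SSLContext:
    """Client-side TLS settings suitable for calling cloud APIs over HTTPS."""
    # create_default_context() loads the system CA store and turns on
    # certificate verification and hostname checking.
    context = ssl.create_default_context(ssl.Purpose.SERVER_AUTH)
    # Reject legacy protocol versions.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context

ctx = make_client_tls_context()
print(ctx.verify_mode == ssl.CERT_REQUIRED, ctx.check_hostname)  # True True
print(ctx.minimum_version.name)  # TLSv1_2
```

You can pass the resulting context to standard clients, for example `urllib.request.urlopen(url, context=ctx)`.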

Network Security involves carefully designing VPC architectures, using security groups to restrict inbound and outbound traffic to only necessary ports and IP ranges, and placing sensitive resources in private subnets inaccessible from the internet.
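Security groups are essentially allow-lists: traffic that matches no rule is dropped. This sketch models inbound rules as protocol, port range, and source CIDR, and checks a connection against them with the standard `ipaddress` module. The rule set and addresses are hypothetical. The model also omits real features such as stateful handling of return traffic and rules that reference other security groups.

```python
import ipaddress
from dataclasses import dataclass

@dataclass(frozen=True)
class InboundRule:
    protocol: str      # "tcp" or "udp"
    from_port: int
    to_port: int
    source_cidr: str

    def permits(self, protocol: str, port: int, source_ip: str) -> bool:
        return (
            protocol == self.protocol
            and self.from_port <= port <= self.to_port
            and ipaddress.ip_address(source_ip) in ipaddress.ip_network(self.source_cidr)
        )

def is_inbound_allowed(rules: list[InboundRule], protocol: str, port: int, source_ip: str) -> bool:
    """Allow-list semantics: permitted only if at least one rule matches."""
    return any(rule.permits(protocol, port, source_ip) for rule in rules)

# Hypothetical web tier: HTTPS from anywhere, SSH only from one corporate range.
web_tier_rules = [
    InboundRule("tcp", 443, 443, "0.0.0.0/0"),
    InboundRule("tcp", 22, 22, "203.0.113.0/24"),
]

print(is_inbound_allowed(web_tier_rules, "tcp", 443, "198.51.100.7"))   # True
print(is_inbound_allowed(web_tier_rules, "tcp", 22, "198.51.100.7"))    # False
print(is_inbound_allowed(web_tier_rules, "tcp", 5432, "203.0.113.10"))  # False
```

The database port is closed to everyone here. In the layered design described above, the database would sit in a private subnet whose own rules accept traffic only from the application tier.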

Logging and Monitoring enable rapid detection of and response to suspicious activity. Examples include AWS CloudTrail (API activity logging), Amazon GuardDuty (threat detection), and Microsoft Sentinel (a SIEM, formerly Azure Sentinel).

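Compliance frameworks and regulations (SOC 2, ISO 27001, HIPAA, PCI-DSS, GDPR) have cloud-specific implementation guidance. Major providers maintain certifications and attestations for their infrastructure. Customers can rely on these for the provider’s share of the controls, but they remain responsible for demonstrating that what they build on top is compliant.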

Economics of Cloud Computing

The economic proposition of cloud computing rests on several pillars.

Capital expenditure vs. operating expenditure is the most fundamental shift. Traditional IT required large upfront investments in hardware that depreciated over time. Cloud converts that to a variable operating expense aligned with actual usage. For startups and growing companies, this is transformative — you do not need to raise capital to buy servers before you know whether your product will succeed.

Economies of scale allow cloud providers to buy hardware, power, and cooling at volumes that individual organizations cannot match, and competition pushes them to pass some of those savings on. Unit prices for cloud compute and storage have fallen substantially over time. AWS says it has reduced prices more than 100 times since 2006 across its services.

Elasticity means you pay only for what you use, and you can scale capacity up or down in minutes. A retail company can handle 10x traffic during the holiday season without permanently carrying that capacity through the slow months. Before cloud, it had to either over-provision (wasting money) or under-provision (risking crashes under load).
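A back-of-the-envelope comparison shows why elasticity matters for seasonal demand. The sketch assumes a hypothetical hourly price per server and a made-up demand profile: 10 servers for ten months and 100 servers (10x) for the two holiday months. It compares provisioning for the peak all year against capacity that tracks each month's demand. Real bills also involve committed-use discounts, data transfer, and storage, which can narrow or widen the gap.

```python
HOURLY_PRICE = 0.10     # hypothetical price per server-hour, in dollars
HOURS_PER_MONTH = 730   # average hours in a month (8,760 / 12)

# Hypothetical servers needed each month: 10x baseline in November and December.
monthly_demand = [10] * 10 + [100] * 2

def cost(servers_per_month: list[int]) -> float:
    return sum(s * HOURS_PER_MONTH * HOURLY_PRICE for s in servers_per_month)

# Fixed capacity must be sized for the busiest month, all year long.
fixed_cost = cost([max(monthly_demand)] * len(monthly_demand))
# Elastic capacity tracks demand month by month.
elastic_cost = cost(monthly_demand)

print(f"Provisioned for peak: ${fixed_cost:,.0f}")    # $87,600
print(f"Elastic:              ${elastic_cost:,.0f}")  # $21,900
```

Under these assumptions, the elastic approach costs a quarter of the peak-provisioned one, because the peak capacity is paid for only during the two months it is used.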

Total Cost of Ownership (TCO) comparisons between on-premises and cloud are more nuanced than pure compute costs. When you factor in data center real estate, hardware maintenance, power, cooling, networking, IT staff salaries, and the opportunity cost of time spent managing infrastructure, cloud often delivers significant net savings — especially for organizations without dedicated infrastructure teams.

That said, cloud costs can spiral unexpectedly if not carefully managed. The discipline of FinOps (cloud financial operations) has emerged to help organizations monitor, optimize, and govern their cloud spending through practices like rightsizing instances, using reserved capacity for predictable workloads, and identifying and eliminating idle resources.
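Identifying idle resources often starts with a simple utilization report. The sketch filters a hypothetical inventory by average CPU utilization and flags anything below a threshold as a candidate for rightsizing or shutdown. The instance records, the 5% threshold, and the monthly costs are all made up for illustration. In practice, the metrics would come from a provider's monitoring service, and CPU alone is a crude signal that should be checked against memory and network activity.

```python
from dataclasses import dataclass

@dataclass
class Instance:
    name: str
    avg_cpu_percent: float  # average over the review period
    monthly_cost: float     # dollars

def idle_candidates(instances: list[Instance], cpu_threshold: float = 5.0) -> list[Instance]:
    """Instances whose average CPU is below the threshold, most expensive first."""
    idle = [i for i in instances if i.avg_cpu_percent < cpu_threshold]
    return sorted(idle, key=lambda i: i.monthly_cost, reverse=True)

inventory = [
    Instance("web-1", 42.0, 140.0),
    Instance("batch-old", 0.8, 560.0),
    Instance("test-db", 3.1, 280.0),
    Instance("api-2", 27.5, 140.0),
]

flagged = idle_candidates(inventory)
for inst in flagged:
    print(f"{inst.name}: {inst.avg_cpu_percent}% CPU, ${inst.monthly_cost:.0f}/month")
print(f"Potential monthly savings: ${sum(i.monthly_cost for i in flagged):.0f}")
# Potential monthly savings: $840
```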

Real-World Use Cases

Startups and Digital Natives often use cloud as their entire infrastructure from day one, which lets them compete with established players without the capital burden of building data centers. Airbnb has run on AWS since its early days. Netflix and Spotify migrated their platforms from their own data centers to AWS and Google Cloud, respectively.

Enterprise Digital Transformation involves large organizations migrating legacy applications from on-premises data centers to the cloud to reduce costs, improve agility, and access modern services. It is one of the largest growth drivers for the major providers today.

Big Data and Analytics workloads — processing and analyzing petabytes of data from web logs, IoT sensors, transaction records, and social media — are natural fits for cloud due to the elastic compute needed and the tight integration with managed data warehousing (Snowflake, BigQuery, Redshift) and analytics tools.

Disaster Recovery and Business Continuity — organizations replicate critical systems to the cloud as a cost-effective failover target, drastically reducing the recovery time objective (RTO) and recovery point objective (RPO) compared to traditional DR approaches.

AI and Machine Learning model training requires enormous, temporary bursts of GPU compute — exactly the kind of workload cloud handles elegantly. Researchers and data scientists can spin up a cluster of 100 GPU instances, train a model over a weekend, and shut them all down, paying only for those hours of use.

Edge Computing and IoT is an emerging use case where cloud intelligence is pushed out to devices and edge locations closer to where data is generated — factory floors, retail stores, autonomous vehicles — to reduce latency and bandwidth costs while maintaining central management and analytics in the core cloud.

Challenges and Criticisms

Vendor Lock-In is a genuine concern. Once you deeply integrate with a cloud provider’s proprietary services — their managed databases, their serverless platform, their machine learning tools — migrating away becomes costly and complex. Containerization and open-source tooling mitigate this, but it remains a real strategic consideration.

Latency — the time for a network round trip to a data center — is an inherent limitation for applications requiring real-time responses, such as industrial control systems, augmented reality, and autonomous vehicles. Edge computing strategies address this, but the physics of network propagation cannot be entirely overcome.
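A quick calculation shows why. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200,000 km per second. Distance alone therefore sets a floor on round-trip time before any routing, queuing, or processing delay is added. The distances below are rough approximations, and real cable routes are longer than straight-line distances.

```python
FIBER_SPEED_KM_PER_S = 200_000  # approx. speed of light in fiber (~2/3 of c)

def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * distance_km * 1000 / FIBER_SPEED_KM_PER_S

# Approximate straight-line distances; actual cable paths are longer.
routes = {
    "same metro area": 50,
    "New York to London": 5_600,
    "Tokyo to Northern Virginia": 11_000,
}

for route, km in routes.items():
    print(f"{route:28} >= {min_round_trip_ms(km):6.1f} ms")
```

A control loop that must react within a few milliseconds cannot depend on a data center on another continent, however fast its servers are. That is why such workloads move to the edge.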

Data Privacy and Sovereignty concerns arise when regulations (such as GDPR in Europe) require that certain data remain within specific geographic boundaries, or when organizations are uncomfortable with the idea of their sensitive data residing on shared infrastructure. Private cloud and regional data residency options from major providers help address this.

Outages — even the largest cloud providers experience occasional incidents. AWS, Azure, and GCP have all suffered notable multi-hour outages in major regions that cascaded into widespread internet disruption, underlining the concentration risk created by global dependence on a few providers.

Environmental Impact is increasingly scrutinized. Data centers consume vast amounts of electricity. However, hyperscale providers argue — with supporting evidence — that the efficiency gains of cloud consolidation, combined with their substantial investments in renewable energy, result in a better carbon footprint than the fragmented, inefficient on-premises data centers they replace.

The Future of Cloud Computing

Serverless and higher-level abstractions will continue to advance, pushing developers further from infrastructure concerns so they can concentrate on application logic. For most use cases, the default level of abstraction will keep rising.

Edge and 5G convergence will bring cloud-grade computing power to the network edge — cell towers, base stations, retail locations, and factories — enabling latency-sensitive applications that cannot tolerate round trips to central data centers. AWS Wavelength, Azure Edge Zones, and Google Distributed Cloud are early expressions of this trend.

AI-native cloud is emerging as AI services become first-class citizens in every cloud platform, embedded into storage, databases, analytics, security, and developer tools. The race to offer the best-integrated AI infrastructure is now the fiercest battleground among the major providers.

Quantum Computing as a Service is already available in early form. IBM, Google, AWS (through Amazon Braket), and Azure (through Azure Quantum) offer access to early quantum processors via cloud APIs. Researchers and enterprises can therefore experiment with quantum algorithms without building their own quantum hardware.

Sustainability will become a differentiating factor as organizations increasingly assess the carbon footprint of their cloud usage. Providers are committing to 100% renewable energy and carbon neutrality, and new tools for measuring and optimizing the environmental impact of cloud workloads are emerging.

Conclusion

Cloud computing is not a single technology — it is an architectural and economic paradigm shift in how computing resources are provisioned, consumed, and paid for. It rests on physical data centers, virtualization, software-defined networking, and distributed systems engineering. It expresses itself through IaaS, PaaS, SaaS, serverless, and managed services. It is delivered by a handful of hyperscale providers with global infrastructure footprints, and it underpins nearly every digital experience in the modern world.

Understanding cloud computing — not just as a buzzword but as a technical and economic system — is increasingly a baseline competency for engineers, architects, business leaders, and policymakers alike. Whether you are building a startup, transforming a legacy enterprise, designing a new application, or evaluating where your data lives and who is responsible for protecting it, the cloud is the terrain on which these decisions now play out.

The shift to cloud is not a destination. It is a continuously evolving landscape — and the organizations and individuals who understand it deeply will be best positioned to thrive within it.