Iris Scanning Technology Explained in Detail – A Complete Guide to How Your Eyes Unlock the World

Published: February 2026 | Biometrics, Security & Technology

Introduction

Of all the biometric identifiers available to science and security — fingerprints, voice, face, gait, DNA — the human iris stands apart as perhaps the most uniquely powerful. It is stable from about one year of age until death, virtually impossible to forge, scannable at a distance without physical contact, and statistically more unique than a fingerprint by several orders of magnitude.

Iris scanning technology has quietly moved from science fiction and high-security government installations into everyday life. It is now found in smartphones, international border crossings, hospitals, banking systems, and even supermarket checkout lanes. Yet despite its growing ubiquity, most people have little idea how it actually works.

In this comprehensive guide, we will explore the biology of the iris, the physics and engineering behind iris scanners, the sophisticated algorithms that decode and match iris patterns, the security architecture protecting iris data, and the emerging applications and challenges shaping the future of this remarkable technology.

What Is the Iris? The Biology Behind the Biometric

The iris is the colored ring of tissue surrounding the pupil of the eye. Its primary biological function is to regulate the amount of light entering the eye by controlling the size of the pupil — dilating in low light and contracting in bright light. But it is the iris’s extraordinary structural complexity that makes it so valuable as a biometric identifier.

The Anatomy of the Iris

The iris is a thin, circular diaphragm composed of two layers: the anterior border layer on the front surface and the stroma, a fibrous connective tissue layer beneath it. Within the stroma lies a dense and intricate landscape of biological features:

- Crypts: Deep pits or depressions scattered across the iris surface.

- Furrows: Concentric contraction furrows that form circular grooves.

- Pigment spots (nevi): Concentrated deposits of melanin pigment.

- Collarette: A zigzag ring structure that separates the inner pupillary zone from the outer ciliary zone — considered the most distinctive feature region.

- Radial fibers: Fibrous strands radiating outward from the pupil.

- Vascular patterns: The network of tiny blood vessels within the iris.

- Crypts of Fuchs: Small, petal-shaped pits near the collarette region.

These features develop through a process that is partly genetic and partly the result of random epigenetic events during fetal development. The result is that even identical twins — who share the same DNA — have completely different iris patterns. More remarkably, the left and right irises of the same person are also entirely different.

Why the Iris Is the Gold Standard in Biometrics

The iris has several properties that make it uniquely suited for biometric identification:

- Uniqueness: The probability of two irises producing the same IrisCode (the standard iris representation) is approximately 1 in 10^78 — essentially zero for practical purposes.

- Stability: Unlike fingerprints, which can be worn down by physical labor or skin conditions, the iris pattern remains virtually unchanged from about 12 months of age until death.

- Internal and protected: The iris is a protected internal organ, shielded behind the cornea. It cannot be easily damaged or altered without significant trauma to the eye.

- Non-contact: Iris scanning requires no physical touch, reducing hygiene concerns and making high-throughput scanning practical.

- Liveness: The pupil’s dynamic response to light provides a natural, built-in liveness check — a live eye responds in ways a photograph cannot replicate.

Key Fact: A human iris contains approximately 266 measurable degrees of freedom — compared to about 13–60 for a fingerprint. This extraordinary information density is what makes iris recognition so uniquely accurate.

A Brief History of Iris Recognition Technology

The idea that the iris could be used for personal identification was first proposed in 1936 by ophthalmologist Frank Burch, who suggested that the patterns of the iris could serve as a unique identifier. The concept remained theoretical for decades.

In 1987, ophthalmologists Leonard Flom and Aran Safir patented the concept of using iris patterns for automated identification. The patent described the iris as an “optical fingerprint” but did not specify a practical algorithm for doing so.

The critical breakthrough came in 1993 when computer scientist and cryptographer John Daugman, working at Cambridge University, developed the first working algorithm for iris recognition. His algorithm — based on Gabor wavelets, phase encoding, and Hamming distance matching — became the foundation of virtually every commercial iris recognition system deployed over the next three decades. Daugman’s work remains the dominant paradigm in the field.

By the late 1990s and 2000s, iris recognition systems were deployed in high-security environments including nuclear power plants, immigration control (the UAE began using iris for border control in 2001), and correctional facilities. Samsung introduced the first smartphone with an iris scanner — the Galaxy Note 7 — in 2016, bringing the technology to mass consumer markets.

How Iris Scanning Works: Step by Step

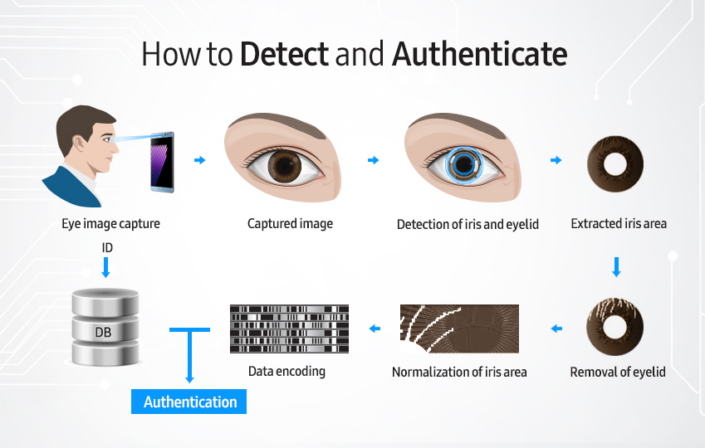

The iris recognition process can be broken down into five core stages: illumination and image capture, iris detection and segmentation, normalization, feature extraction, and template matching. Each stage involves sophisticated engineering and algorithms working in concert.

Stage 1: Illumination and Image Capture

The first challenge in iris recognition is capturing a high-resolution, well-focused image of the iris in a way that reveals its fine texture — even in varying ambient light conditions and even when the iris is highly pigmented (dark brown or black irises present more challenge than lighter ones).

Near-Infrared (NIR) Illumination

The cornerstone of iris imaging is near-infrared light, typically in the 700–900 nanometer wavelength range — just beyond the visible spectrum. NIR illumination is used for several critical reasons:

- It reveals texture in dark irises that is invisible under visible white light. Melanin — the pigment that makes irises brown or black — strongly absorbs visible light but is relatively transparent to NIR wavelengths, allowing the underlying tissue patterns to be imaged.

- It avoids the glare artifacts caused by visible light reflecting off the corneal surface.

- It is invisible and non-disturbing to the subject, making it comfortable for repeated use.

- It causes minimal pupil constriction, preserving the iris texture area.

The NIR light source consists of LEDs emitting in the 800–850 nm range, arranged to provide uniform, diffuse illumination of the eye. The camera sensor must be sensitive to NIR wavelengths — standard visible-light CMOS sensors are modified or replaced with NIR-sensitive variants.

Camera and Optics

The camera must capture sufficient detail to extract the fine texture of the iris. Typical requirements include a minimum resolution of 200 pixels across the iris diameter, though high-end systems achieve 400+ pixels for greater accuracy. The image must be in sharp focus, which requires precise autofocus mechanisms when the subject’s distance from the camera varies.

Long-range iris scanners — used in airports and border control — can capture iris images at distances of 1–3 meters or more using telephoto lenses. Some military and research systems have demonstrated capture at distances exceeding 10 meters. At the consumer end, smartphone iris cameras typically operate at 20–40 cm.

Dealing with Challenging Conditions

Real-world iris capture must handle a range of challenging conditions: glasses (which cause reflections), contact lenses (which may distort the iris texture), oblique viewing angles, heavy eyelid occlusion, motion blur, poor focus, and bright ambient sunlight competing with the NIR illumination. Modern systems use multiple frames captured in rapid succession, selecting the sharpest and best-exposed image for processing.

Engineering Note: High-end iris scanners capture 30 frames per second and algorithmically select the optimal frame based on focus score, iris visibility, pupil shape regularity, and NIR reflection quality.

Stage 2: Iris Detection and Segmentation

Once a suitable image is captured, the system must precisely locate and isolate the iris region — separating it from the pupil (the dark central opening), the surrounding white sclera, the eyelids, and eyelashes.

Pupil and Iris Boundary Detection

The process begins by locating the pupil — the darkest circular region in the NIR image. Because the pupil absorbs nearly all light at NIR wavelengths, it appears as a very dark, roughly circular area. Algorithms use thresholding and circular Hough transforms to precisely locate and fit a circle to the pupil boundary.

The outer iris boundary (the iris-sclera limbus) is then detected. This boundary is typically less well-defined than the pupil-iris boundary, as the transition from iris to sclera is gradual. Active contour models (snakes) and integro-differential operators — as developed by Daugman — are used to find the best-fitting circle or ellipse for this boundary.

Occlusion Masking

The upper and lower eyelids often cover portions of the iris, and eyelashes cast shadows or directly occlude parts of the iris texture. The segmentation stage identifies and masks these occluded regions so they are excluded from feature extraction. Parabolic curve fitting is commonly used to model eyelid boundaries. Any iris region that is unreliable — due to eyelid, eyelash, reflection, or specular highlight occlusion — is flagged and excluded from subsequent processing.

Stage 3: Normalization

The iris region, once segmented, exists in Cartesian (x, y) image coordinates as a ring-shaped region. The size and shape of this ring vary depending on how dilated or constricted the pupil is (which changes with lighting conditions), the distance of the eye from the camera, and the angle of gaze. Before features can be extracted and compared, the iris region must be normalized to a standard format.

Daugman’s rubber sheet model is the dominant normalization approach. It maps the ring-shaped iris region to a rectangular strip of fixed dimensions — typically 64 rows by 512 columns of pixels — using a polar coordinate transformation. This mapping is pupil-dilation invariant: it stretches or compresses the iris representation proportionally based on the detected pupil and iris radii, so the resulting strip represents the same anatomical iris features regardless of pupil size.

The normalization also accounts for rotational variation — if the image is captured with the head slightly tilted, the algorithm can compensate during the matching stage by trying multiple small rotational offsets.

Stage 4: Feature Extraction — Creating the IrisCode

Feature extraction is the algorithmic heart of iris recognition. The goal is to transform the normalized iris image into a compact, discriminating representation that captures the essential identity information while discarding irrelevant variation due to lighting, imaging noise, and pupil dilation.

Daugman’s Gabor Wavelet Approach

John Daugman’s original and still-dominant approach uses 2D Gabor wavelets to extract phase information from the iris texture. Gabor wavelets are mathematical functions that are simultaneously localized in both spatial position and spatial frequency — they capture local texture patterns at specific orientations and scales, much like the receptive fields of neurons in the visual cortex.

The algorithm applies a bank of Gabor filters at different orientations and frequencies to the normalized iris strip. For each filter response at each spatial location, only the phase of the complex-valued response is recorded — not the magnitude. Phase information is highly robust to changes in illumination and contrast, since phase is largely independent of how bright or dark the image is.

Each phase value is quantized into two bits: 00 for phase in the first quadrant (0°–90°), 01 for the second (90°–180°), 10 for the third (180°–270°), and 11 for the fourth (270°–360°). A typical IrisCode contains 2,048 bits (256 bytes) of phase data, plus an equal number of mask bits indicating which portions of the code are reliable (not occluded). The total IrisCode template is typically 512 bytes.

Other Feature Extraction Approaches

While Daugman’s IrisCode is the industry standard, researchers have explored numerous alternative approaches:

- Wavelet packet decomposition: Uses a different multi-resolution analysis to extract texture features.

- Local binary patterns (LBP): Compares each pixel to its neighbors and encodes the result as a binary pattern — a fast and lightweight approach.

- Ordinal measures: Encodes the relative ordering of filter responses at different locations, which is invariant to monotonic changes in illumination.

- Deep learning (CNN-based): Convolutional neural networks trained on large iris datasets learn complex feature hierarchies automatically. CNN-based approaches have shown state-of-the-art accuracy, particularly for challenging conditions like off-angle irises or partially occluded images.

Stage 5: Template Matching — The Hamming Distance

Given two IrisCodes — one freshly captured and one stored in a database — the matching algorithm computes how similar they are. Daugman’s matching metric is the Hamming distance: the fraction of corresponding bits that differ between two IrisCodes.

If two IrisCodes come from the same iris, their Hamming distance will be very small — close to 0.0 — because the phase patterns are highly correlated. If they come from different irises, the Hamming distance will be close to 0.5 — the IrisCodes are essentially statistically independent, and their bits differ about half the time, just like two random binary strings.

The decision threshold is typically set around 0.32. A Hamming distance below this threshold is declared a match; above it is a non-match. The rotational invariance challenge is handled by computing the Hamming distance at multiple small rotational offsets of one IrisCode relative to the other and taking the minimum value — this compensates for any head tilt during capture.

The mask bits (indicating occluded regions) are ANDed together so that bits from unreliable regions in either IrisCode do not contribute to the Hamming distance calculation. This ensures that a heavily occluded iris (e.g., drooping eyelids) is still accurately matched based on the visible regions.

Statistical Power: The theoretical false match rate for IrisCode comparison at a threshold of 0.32 is approximately 1 in 1.2 million — and because iris databases are typically searched with stringent secondary checks, operational false accept rates are far lower still.

Types of Iris Scanning Systems

1. Single-Eye Stationary Scanners

The traditional form factor: a fixed device (often the size of a small monitor or terminal) that captures one iris at a time. The user positions their eye within a target zone, guided by a live preview. These are common in access control panels, time-attendance kiosks, and border control primary inspection lanes. Examples include iris scanners from IriTech, Iris ID, and Crossmatch.

2. Dual-Eye Scanners

Capture both irises simultaneously, doubling the biometric data and significantly improving accuracy and speed. Used in high-throughput environments like airport immigration halls. The probability of a false match with two irises is the square of the probability for one — approximately 1 in 10^78, making dual-iris recognition effectively infallible at any practical database scale.

3. On-the-Move (OTM) Iris Systems

Designed to capture iris images of people walking at normal pace without requiring them to stop or interact with a device. These systems use wide-baseline stereo cameras, high-speed tracking algorithms, and high-powered NIR illumination to lock onto the eyes of a moving subject and capture a usable image. Deployed in high-security checkpoints where throughput and non-intrusiveness are critical.

4. Smartphone Iris Scanners

Consumer smartphone iris cameras are miniaturized versions of dedicated iris scanners. Samsung Galaxy S and Note series devices (2016–2020) and Microsoft Lumia devices featured dedicated NIR-LED + NIR-camera modules for iris recognition. The user holds the phone at 20–35 cm from their face and looks at the front camera. Due to size constraints, smartphone iris systems use smaller sensor arrays and lower NIR power, resulting in shorter capture distances and slightly reduced accuracy compared to dedicated terminals — but still far exceeding other biometric modalities for uniqueness.

5. Long-Range and Covert Iris Systems

Specialized systems capable of capturing iris images at ranges of 3–12 meters, developed primarily for military, intelligence, and law enforcement applications. These use telephoto lenses, high-sensitivity NIR CMOS sensors, and powerful but eye-safe NIR illumination arrays. They raise significant civil liberties concerns regarding surveillance and non-consensual biometric collection.

Security Architecture and Anti-Spoofing

Liveness Detection

The primary spoofing threat to iris recognition is the presentation of a printed photograph or a digital display of an iris image. Modern systems employ multiple liveness detection techniques to distinguish a live eye from a fake:

- Pupillary light reflex: The most robust liveness check. The system flashes NIR light at varying intensities and measures whether the pupil constricts and dilates in response. A printed photograph cannot produce this dynamic response. Some systems use active illumination flickering at specific frequencies and measure the pupil’s temporal response.

- 3D depth sensing: Structured light or stereo cameras verify that the iris is on a curved, three-dimensional surface at the expected depth — ruling out flat photographs.

- Specular reflection analysis: A live eye has a characteristic corneal specular reflection pattern (the bright spot from the NIR LED) that appears at a specific location and shape. Photographs and displays produce different reflection patterns.

- Vascular pulsation: High-sensitivity NIR cameras can detect subtle changes in iris blood vessel patterns synchronized with the heartbeat — impossible to replicate in a static image.

- Texture frequency analysis: Live iris tissue has specific microscale texture frequency characteristics that differ from the printing or display artifacts of a spoofed image.

- AI-based anti-spoofing: Deep learning classifiers trained on thousands of spoof attempt examples can detect presentation attacks with very high accuracy, even against sophisticated silicone prosthetic irises.

Template Security and Encryption

Unlike a password, a biometric template cannot be changed if compromised. For this reason, iris templates require strong cryptographic protection. Modern systems use several layers of defense:

- Cancelable biometrics: The raw IrisCode is transformed using a one-way function parameterized by a user-specific key. The transformed template is stored instead of the raw IrisCode. If compromised, a new transformation key can be issued, effectively creating a new template from the same iris — analogous to changing a password.

- Fuzzy commitment schemes: Cryptographic constructs that allow verification against a stored commitment without ever decrypting or revealing the raw template.

- Secure enclaves: On smartphones and enterprise terminals, iris templates are stored and processed within hardware-isolated secure execution environments (Apple Secure Enclave, ARM TrustZone) inaccessible to the main OS.

- ISO/IEC 19794-6 compliance: An international standard defining the storage format for iris image data, enabling interoperability between different vendors’ systems while maintaining security.

Database Scale and Search Efficiency

In national-scale deployments — such as India’s Aadhaar system, which has enrolled over 1.3 billion irises — searching a query IrisCode against a database of billions of templates in real time requires extraordinary computational efficiency. Specialized hardware accelerators and multi-stage indexing strategies are used to narrow the search space before exhaustive Hamming distance computation is performed, enabling sub-second search times even at billion-record scale.

Real-World Applications of Iris Scanning

Border Control and Immigration

Iris recognition is one of the most widely deployed biometrics in border security. The United Arab Emirates was among the first countries to use iris scanning at borders in 2001. Today, iris is used in e-passport gates and automated border control systems across Europe, Asia, the Middle East, and North America. Travelers enrolled in programs like Clear (USA) and Privium (Netherlands) can bypass standard passport queues using iris verification.

National Identity Programs

India’s Aadhaar system — the world’s largest biometric database — uses iris scans alongside fingerprints to uniquely identify every Indian resident. With over 1.3 billion enrollees, Aadhaar demonstrates iris recognition operating at truly planetary scale. The system is used for welfare distribution, banking, tax administration, and healthcare access.

Smartphone Authentication

Samsung’s Galaxy Note 7 (2016) was the first mainstream smartphone with an iris scanner, followed by Galaxy S8, S9, Note 8, and S10 before Samsung transitioned to ultrasonic fingerprint and face recognition. Microsoft’s Windows Hello supports iris recognition on devices with compatible hardware, including the Surface Pro line. Iris provides a compelling alternative to face recognition in scenarios where Face ID struggles — such as while wearing a mask or in very low light.

Healthcare

Hospitals and clinics use iris recognition to positively identify patients at the point of care, ensuring medical records, medications, and procedures are matched to the correct individual. This is particularly critical for unconscious or non-communicative patients. Systems like the RightPatient platform have demonstrated significant reductions in patient misidentification errors.

Banking and Financial Services

ATM networks in several countries — including Japan, the UK (NatWest), and several Middle Eastern banks — offer iris-authenticated cash withdrawal as an alternative to PIN entry. Mobile banking apps on iris-capable devices use the onboard iris scanner for secure authentication. The non-contact nature of iris scanning makes it especially appealing in post-pandemic hygiene-conscious environments.

Criminal Justice and Law Enforcement

Law enforcement agencies use iris to identify arrestees and verify the identity of incarcerated individuals. Unlike fingerprints, which require the subject’s cooperation (touching a scanner), iris can be captured from a distance with minimal cooperation. The FBI’s Next Generation Identification (NGI) system incorporates iris as one of several biometric modalities.

Physical Access Control

High-security facilities including nuclear plants, government data centers, defense installations, pharmaceutical laboratories, and financial vaults use iris recognition as a primary or supplementary access control factor. The high accuracy and non-contact operation make iris ideal for sensitive environments requiring both security and hygiene.

Iris vs. Other Biometric Modalities

How does iris recognition compare to other widely deployed biometrics?

Iris vs. Fingerprint

Iris offers significantly higher uniqueness (266 degrees of freedom vs. ~60 for fingerprints), is non-contact, and is unaffected by manual labor or skin conditions. However, fingerprint sensors are cheaper to manufacture, more miniaturized, and have decades of consumer adoption. Both are highly accurate; iris edges out fingerprints at large database scales.

Iris vs. Face Recognition

Face recognition is more convenient — it requires no deliberate action and works at greater distances — but is less accurate and more affected by aging, expression, lighting, occlusion (masks, glasses), and pose variation. Iris recognition requires the subject to be within 20–100 cm of the scanner in most implementations, but delivers significantly lower false match rates. The two technologies are complementary and are often deployed together.

Iris vs. Retinal Scanning

Retinal scanning — a different technology that images the pattern of blood vessels on the back of the eye — is even more unique and stable than iris. However, retinal scanning requires a bright light to be directed deep into the eye, is perceived as invasive and uncomfortable, and requires very close positioning. For these reasons, retinal scanning has largely been displaced by iris recognition in commercial and government deployments.

Iris vs. DNA

DNA is the ultimate biological identifier — uniquely accurate and lifelong stable (except in rare cases of chimerism). But DNA extraction requires physical samples, laboratory analysis takes hours to days, and real-time identification is impractical. Iris recognition operates in milliseconds with no physical contact, making it the practical winner for authentication and identification scenarios.

Challenges and Limitations

Technical Challenges

- Occlusion: Heavy eyelids, drooping ptosis, or large pupils reducing iris area can degrade recognition accuracy. Patients under sedation or with eye conditions present particular challenges.

- Contact lenses: Colored or patterned contact lenses distort or mask the true iris texture, causing recognition failures or security vulnerabilities if an attacker wears a printed iris contact lens.

- Eye diseases: Conditions such as glaucoma, cataracts, iridocyclitis, and eye surgery (including LASIK) can alter the iris surface, potentially affecting recognition.

- Aging: While the iris is far more stable than the face or fingerprint, subtle long-term changes in iris texture have been documented over decades, particularly in very elderly populations. This is a research area of active interest.

- Off-angle capture: Significant gaze deviation or head tilt reduces the visible iris area and introduces perspective distortion, complicating feature extraction.

Privacy and Ethical Concerns

- Covert collection: Long-range iris systems raise the alarming possibility of silently capturing biometric data from individuals in public spaces without consent.

- Immutability: Unlike passwords or even fingerprints (which can be damaged), irises cannot be changed or revoked if biometric templates are stolen in a data breach. This makes iris template database security of paramount importance.

- Mission creep: Iris databases originally collected for immigration control or welfare programs may be subject to repurposing for law enforcement or surveillance — a concern especially acute in authoritarian contexts.

- Algorithmic bias: Research has shown that iris recognition systems can perform differently across demographic groups, particularly for populations underrepresented in training data. Ensuring equitable performance is an ongoing challenge.

- Regulatory landscape: GDPR in Europe and biometric privacy laws in the US (Illinois BIPA, Texas CUBI, etc.) impose strict requirements on the collection, storage, and use of iris data, creating compliance challenges for global deployments.

If you want the pdf version of this article it is available :

The Future of Iris Scanning Technology

Deep Learning and AI-Enhanced Recognition

Convolutional neural network approaches to iris recognition continue to advance rapidly. Models like UniqueNet and IrisParseNet achieve state-of-the-art accuracy on challenging benchmarks, handling off-angle, partially occluded, and low-quality iris images far better than traditional algorithms. On-device neural processing units in modern smartphones make sophisticated AI-based iris recognition practical without cloud connectivity.

Multispectral Iris Imaging

Using multiple NIR wavelengths (rather than a single band) provides richer texture information, particularly for highly pigmented dark irises. Multispectral systems can better distinguish the genuine iris texture from contact lens artifacts, improving both accuracy and anti-spoofing capability.

Periocular Recognition

Periocular biometrics — using the region around the eye (including the sclera, eyelids, skin texture, and eyebrow) in addition to the iris itself — provides additional discriminating information useful when the iris region is partially occluded or too small for reliable recognition. Combined periocular-iris systems are an active research area.

Continuous and Passive Authentication

Future systems may use always-on iris or gaze tracking in smart glasses, car dashboards, or computer monitors to continuously verify the user’s identity without requiring a dedicated scan — providing persistent authentication throughout a session and detecting unauthorized handoff to another person.

Contactless Multi-Biometric Fusion

The most secure and convenient authentication systems of the near future will fuse multiple biometrics — iris, face, voice, gait, and behavioral patterns — captured passively and simultaneously. No single channel need be perfect; the combination creates an authentication factor of extraordinary reliability. Iris will play a central role in this multi-modal future.

Blockchain and Decentralized Biometric Identity

Rather than centralized databases vulnerable to breach, emerging architectures store encrypted biometric commitments on distributed ledgers. Users hold their own biometric credentials, and verification occurs via zero-knowledge proofs that confirm a match without revealing the underlying template or sending it to any server. This approach offers both strong privacy guarantees and resistance to large-scale database attacks.

Conclusion

The human iris — a thin disc of pigmented tissue a little over a centimeter in diameter — holds within its microscopic texture one of nature’s most unique codes. Iris scanning technology harnesses the physics of near-infrared light, the mathematics of Gabor wavelets and Hamming distance, and the engineering of miniaturized optical systems to decode this code in milliseconds.

From John Daugman’s foundational algorithms in the early 1990s to today’s deep-learning-powered systems operating at billion-record scale, iris recognition has evolved into the most accurate and reliable biometric identification technology available to civilian and security applications.

Its combination of extraordinary uniqueness, lifelong stability, non-contact convenience, and natural liveness detection makes it uniquely suited to the challenges of identity at scale — from unlocking a smartphone to crossing an international border to proving eligibility for social services in a country of a billion people.

As the technology continues to advance — becoming faster, more accurate, longer-range, and more privacy-preserving — the quiet revolution of iris recognition will deepen its reach into every corner of the authenticated world. The eye, it turns out, is not just the window to the soul. It is the key to the digital kingdom.

— End of Article —