Quantum Computers Explained in Detail: The Future of Computing

In 2026, as we stand on the brink of a new era in technology, quantum computers are no longer just a sci-fi dream—they’re becoming tangible tools with the potential to revolutionize fields from drug discovery to cryptography. But what exactly are they? How do they work? And what does the future hold? This blog post dives deep into the mechanics of quantum computing, its current state, real-world applications, challenges, and where it’s headed. Whether you’re a tech enthusiast or just curious about the buzz, let’s break it down step by step.

The Basics: Classical Computing vs. Quantum Computing

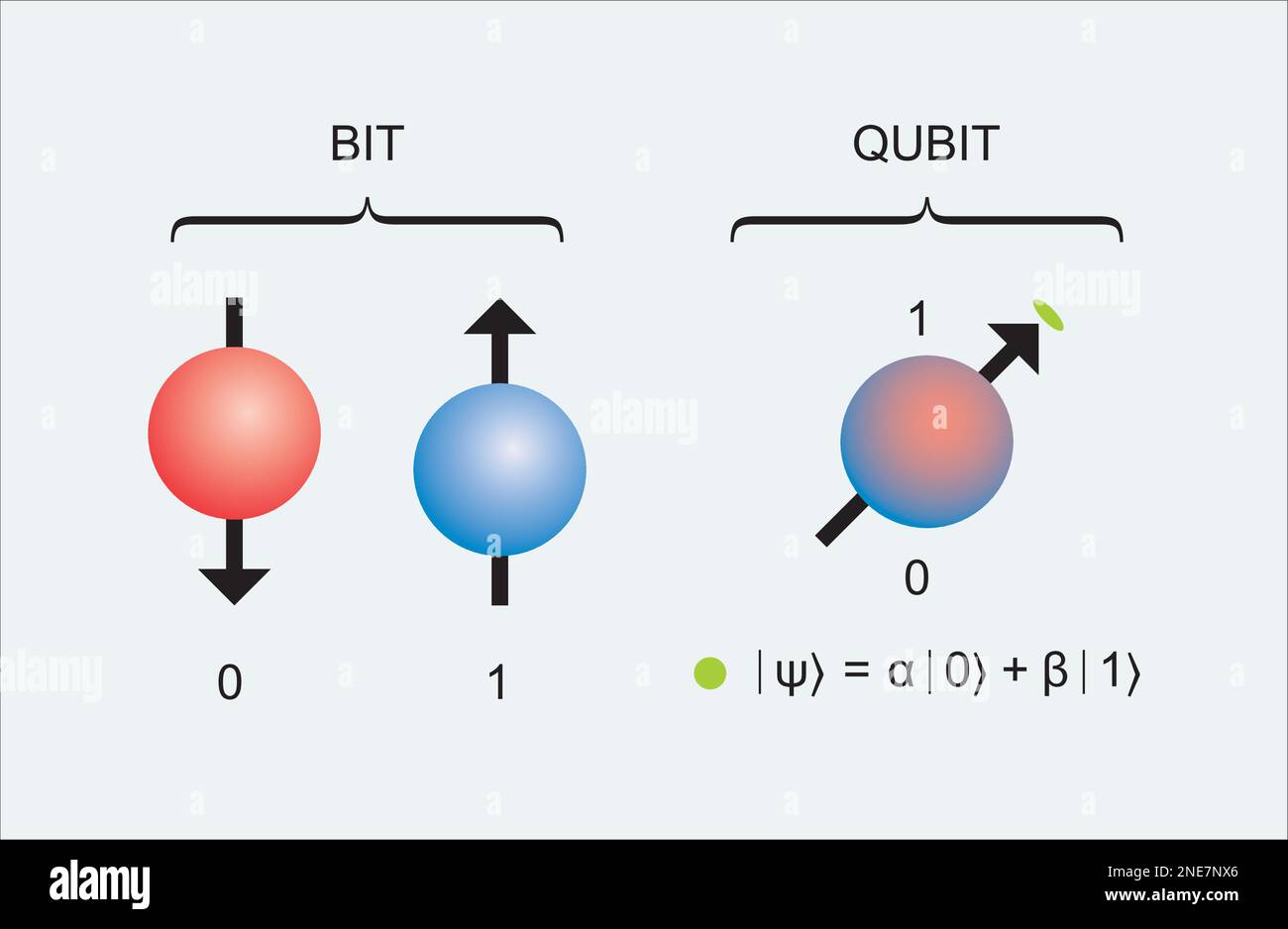

To understand quantum computers, start with what we know: classical computers. These rely on bits—binary units that are either 0 or 1. Everything from your smartphone to supercomputers processes data by flipping these bits through logic gates.

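Quantum computers, however, use qubits (quantum bits). Unlike a bit, a qubit can be in a superposition of 0 and 1, and a register of n qubits is described by 2^n complex amplitudes. For certain problems, such as factoring large integers or simulating quantum systems, algorithms that exploit this structure are expected to run exponentially faster than the best known classical methods. For most everyday tasks there is no such speedup.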
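To get a feel for why qubits are hard to imitate on classical hardware, here is a rough back-of-the-envelope sketch. It assumes a simulator that stores every one of the 2^n amplitudes as a 16-byte complex number; the function name `statevector_bytes` is made up for this post.

```python
def statevector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store all 2**n amplitudes of an n-qubit state.

    Assumes one complex128 (16 bytes) per amplitude, as a simple
    state-vector simulator would use.
    """
    return (2 ** num_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 30, 50):
        gib = statevector_bytes(n) / 2**30
        print(f"{n} qubits -> {gib:,.6f} GiB")
```

Every extra qubit doubles the memory, which is why brute-force simulation runs out of road at a few dozen qubits.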

Key quantum principles:

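- Superposition: A qubit isn’t limited to 0 or 1; it can be in a weighted combination of both (like a spinning coin that is neither heads nor tails until it lands). Measuring it gives 0 or 1 with probabilities set by those weights. A quantum computer can therefore act on many basis states at once, although a single measurement still returns only one outcome.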


- Entanglement: Qubits can be linked so that their measurement outcomes are correlated, even across distances, more strongly than any classical system allows. This cannot be used to send information faster than light, but it is an essential resource for quantum algorithms and error correction.

- Interference: Quantum algorithms use wave-like behavior to amplify correct answers and cancel out wrong ones.
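The three principles above can be seen in a few lines of linear algebra. This is a minimal sketch, assuming NumPy and the usual textbook matrices for the Hadamard and CNOT gates; the variable names are just for illustration.

```python
import numpy as np

ket0 = np.array([1.0, 0.0])                      # the state |0>
H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)     # Hadamard gate
CNOT = np.array([[1, 0, 0, 0],                   # flips the second qubit
                 [0, 1, 0, 0],                   # when the first qubit is 1
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

# Superposition: one Hadamard gives equal weight to 0 and 1.
plus = H @ ket0
probs_plus = np.abs(plus) ** 2                   # 50/50 between 0 and 1

# Interference: a second Hadamard makes the two paths to |1> cancel.
back = H @ plus
probs_back = np.abs(back) ** 2                   # all weight back on 0

# Entanglement: Hadamard on the first qubit, then CNOT, gives a Bell state.
bell = CNOT @ np.kron(plus, ket0)
probs_bell = np.abs(bell) ** 2                   # only 00 and 11 can occur

if __name__ == "__main__":
    print(probs_plus, probs_back, probs_bell)
```

In the Bell state, the two qubits always agree when measured, yet neither has a definite value beforehand.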

Here’s a quick comparison:

| Aspect | Classical Computing | Quantum Computing |
|---|---|---|
| Basic Unit | Bit (0 or 1) | Qubit (0, 1, or a superposition of both) |
| Processing | Sequential or parallel (limited by hardware) | Gates act on superpositions of many states; interference singles out the answer |
| Speed for Complex Problems | Best known algorithms can take exponential time (e.g., factoring, quantum simulation) | Exponential or quadratic speedup for specific tasks only |
| Examples | Everyday PCs, servers | IBM’s Eagle (127 qubits), Google’s Willow |
| Limitations | Struggles with some optimization and simulation problems | Prone to errors; many designs require ultra-cold temperatures |

How Quantum Computers Work

Quantum computers aren’t built like classical ones. They use various technologies for qubits:

- Superconducting Loops: Used by IBM and Google. These are electrical circuits cooled to near absolute zero so that they behave quantum mechanically.


- Trapped Ions: Charged atoms held in electromagnetic traps and controlled with lasers (e.g., IonQ, Quantinuum).

- Neutral Atoms: Emerging tech with laser-trapped atoms (e.g., Atom Computing, QuEra).

- Photonic: Light-based qubits for room-temperature potential (e.g., Xanadu).

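Operations happen via quantum gates (the quantum counterpart of classical logic gates, with the difference that they are reversible). Algorithms like Shor’s (factoring large numbers in polynomial time, which threatens RSA-style encryption) or Grover’s (searching an unstructured set of N items in roughly √N steps, a quadratic rather than exponential speedup) exploit these properties.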
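Grover’s algorithm is small enough to simulate directly. The sketch below is a toy state-vector simulation in NumPy, not how a real device runs it: the "oracle" is just a sign flip on an index we already know, and `grover_search` is an illustrative name.

```python
import numpy as np


def grover_search(num_qubits: int, marked: int):
    """Simulate Grover's search over 2**num_qubits items.

    Returns the final measurement probabilities and the number of
    iterations used (about pi/4 * sqrt(N)).
    """
    n_states = 2 ** num_qubits
    # Start in the uniform superposition over all items.
    state = np.full(n_states, 1 / np.sqrt(n_states))
    iterations = int(np.floor(np.pi / 4 * np.sqrt(n_states)))
    for _ in range(iterations):
        state[marked] *= -1                 # oracle: flip the marked amplitude
        state = 2 * state.mean() - state    # diffusion: invert about the mean
    return np.abs(state) ** 2, iterations


if __name__ == "__main__":
    probs, steps = grover_search(num_qubits=3, marked=5)
    print(f"{steps} iterations, P(marked) = {probs[5]:.3f}")
```

With 8 items, two iterations are enough to make the marked item the overwhelmingly likely measurement result, where a classical search would need about four lookups on average.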

A quantum computation involves:

- Initializing qubits (typically to 0) and putting them into superposition.

- Applying gates to entangle and manipulate them.

- Measuring to collapse states into classical results.

But here’s the catch: measurement destroys superposition and returns only one outcome, so algorithms must arrange the interference so that the right answer is the one most likely to be measured.
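The three steps above (initialize, apply gates, measure) can be sketched as a tiny simulated circuit. This assumes NumPy and an idealized, noise-free machine; the 1,000 "shots" mimic how real hardware is run many times to build up statistics.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)     # Hadamard gate
I2 = np.eye(2)                                   # do nothing to a qubit
CNOT = np.array([[1, 0, 0, 0],
                 [0, 1, 0, 0],
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

# Step 1: initialize two qubits in |00>.
state = np.zeros(4)
state[0] = 1.0

# Step 2: apply gates (Hadamard on the first qubit, then CNOT to entangle).
state = np.kron(H, I2) @ state
state = CNOT @ state

# Step 3: measure. Each shot collapses to one classical bit string.
probs = np.abs(state) ** 2
shots = rng.choice(4, size=1000, p=probs)
counts = {format(i, "02b"): int(np.sum(shots == i)) for i in range(4)}

if __name__ == "__main__":
    print(counts)
```

Each individual shot reveals just two classical bits; the superposition only shows up in the statistics across many runs.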

Current State in 2026

As of March 2026, quantum computing is in the “Noisy Intermediate-Scale Quantum” (NISQ) era: systems with hundreds of qubits but high error rates. Key advancements from late 2024 and 2025:

- Atom Computing and Microsoft demonstrated 24 entangled logical qubits, integrated into Azure Quantum.

- Rigetti’s Ankaa-3 targets gate fidelity above 99%; the company had planned 100+ qubit systems by the end of 2025.

- AWS’s Ocelot chip uses cat qubits for better error suppression.

- NSF-funded breakthroughs: error rates below key error-correction thresholds, and a 6,100-qubit neutral-atom array.

Hype cooled in 2025 after stock surges, with predictions of fading enthusiasm in 2026 due to delayed practical uses. Yet some experts call this the field’s “transistor moment”: the devices work, but they still need to scale.

Major players: IBM (aiming for verifiable quantum advantage by 2026), Google, Microsoft, Rigetti, IonQ.

Real-World Applications

Quantum computers are expected to excel at certain problems classical ones struggle with:

- Drug Discovery & Materials Science: Simulate molecules for new drugs or batteries (e.g., faster responses to outbreaks like COVID-19).

- Optimization: Logistics, supply chains, scheduling (e.g., airline routes).

- Cryptography: A large, fault-tolerant machine running Shor’s algorithm could break RSA encryption, which is driving the move to quantum-safe standards.

- AI & Machine Learning: Potentially faster training and better pattern recognition, though clear advantages here are still unproven.

- Finance: Risk modeling, portfolio optimization.

- Climate Modeling: Accurate simulations for weather or carbon capture.
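The cryptography item rests on a neat piece of number theory: factoring a number reduces to finding the period (or "order") of a modular exponent. The sketch below does that period finding by brute force, which is exactly the step Shor’s algorithm would hand to a quantum computer. The function names are invented for this post, and N = 15 is a toy example.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Requires gcd(a, n) == 1. This is the slow classical step that
    Shor's algorithm replaces with quantum period finding.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Try to split n using the order of a modulo n; None if a is unlucky."""
    common = gcd(a, n)
    if common != 1:
        return common, n // common        # lucky guess: a shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                       # odd order: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                       # trivial square root: pick another a
    p = gcd(half - 1, n)
    return p, n // p


if __name__ == "__main__":
    print(find_order(7, 15), factor_from_order(7, 15))
```

For real RSA moduli with thousands of bits, the brute-force loop in `find_order` is hopeless, and that is the gap a fault-tolerant quantum computer would close.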

In early 2026, the first practical gains are being sought through hybrid quantum-classical systems, in which a classical computer steers a quantum processor.
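A hybrid loop usually looks like this: a classical optimizer proposes circuit parameters, the quantum processor estimates a cost, and the optimizer updates the parameters. Below is a deliberately tiny sketch of that pattern, assuming NumPy and a one-qubit "processor" that is simulated rather than real; `energy` and `optimize` are illustrative names, and the gradient uses the standard parameter-shift rule for rotation gates.

```python
import numpy as np

Z = np.array([[1, 0], [0, -1]], dtype=float)     # observable to minimize


def ry(theta: float) -> np.ndarray:
    """Single-qubit rotation about the Y axis."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]])


def energy(theta: float) -> float:
    """'Quantum' step (simulated): prepare RY(theta)|0> and return <Z>."""
    state = ry(theta) @ np.array([1.0, 0.0])
    return float(state @ Z @ state)


def optimize(theta: float = 0.4, lr: float = 0.4, steps: int = 100):
    """Classical step: gradient descent using the parameter-shift rule."""
    for _ in range(steps):
        grad = (energy(theta + np.pi / 2) - energy(theta - np.pi / 2)) / 2
        theta -= lr * grad
    return theta, energy(theta)


if __name__ == "__main__":
    best_theta, best_energy = optimize()
    print(f"theta = {best_theta:.4f}, energy = {best_energy:.6f}")
```

The optimizer never looks inside the quantum state; it only sees measured numbers, which is what makes this division of labor workable on noisy hardware.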

Challenges Facing Quantum Computing

Despite progress, hurdles remain:

- Error Rates & Decoherence: Qubits are fragile; environmental noise causes errors. Depending on the code and the hardware error rate, error correction may need hundreds to thousands of physical qubits per logical one.

- Scalability: Building stable, large-scale systems is hard, and the most demanding applications may need millions of physical qubits.

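- Cooling & Resources: Superconducting designs must be cooled to near absolute zero (a few thousandths of a kelvin), which is energy-intensive. Other platforms, such as photonics, may ease this requirement.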

- Talent & Integration: Shortage of experts; hybrid setups needed.

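- Security Risks: With “harvest now, decrypt later” attacks, encrypted data stolen today could be decrypted once capable machines exist, which makes the move to post-quantum encryption urgent.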
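The idea behind error correction, trading many noisy qubits for one more reliable one, can be illustrated with the simplest possible code. The sketch below is a classical stand-in: three copies of a bit, each flipped independently with probability p, decoded by majority vote. Real quantum codes must also handle phase errors and cannot simply read the qubits out, so treat this as an analogy only; `logical_error_rate` is an illustrative name.

```python
import numpy as np


def logical_error_rate(p: float, trials: int = 200_000, seed: int = 0) -> float:
    """Estimate how often majority vote over 3 noisy copies gets it wrong."""
    rng = np.random.default_rng(seed)
    flips = rng.random((trials, 3)) < p          # True where a copy flipped
    return float(np.mean(flips.sum(axis=1) >= 2))  # 2+ flips fools the vote


p = 0.05
simulated = logical_error_rate(p)
# Exact value: two flips (three ways) or all three flips.
analytic = 3 * p**2 * (1 - p) + p**3

if __name__ == "__main__":
    print(f"physical error rate: {p}")
    print(f"logical error rate:  {simulated:.5f} (exact {analytic:.5f})")
```

The encoded bit fails far less often than a single copy, but only because the physical error rate is already low. That is why pushing hardware errors below a threshold matters so much.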

Environmental concerns: High energy use, rare materials.

The Future of Computing: Quantum’s Horizon

During 2026, expect the first early fault-tolerant systems to be delivered (e.g., from Microsoft and Atom Computing, and from QuEra), along with early advantages in optimization and the expansion of quantum-as-a-service.

Longer term (2027 into the 2030s): fault-tolerant machines solving real problems. IBM predicts quantum advantage by 2026 and fault tolerance by 2029. Hybrid setups are likely to dominate, with quantum processors enhancing AI and classical workloads.

Quantum will transform computing, but not replace classical—it’s a complement for tough problems. Ethical focus: Responsible innovation, transparency.

Conclusion

Quantum computing is a major part of computing’s future, blending physics and tech to tackle problems that are intractable today. In 2026, we’re shifting from hype to hybrid realities, with error correction paving the way. While challenges like stability persist, breakthroughs in qubits and algorithms promise a computing revolution.

Excited about quantum? Share your thoughts—will it change your field? Drop a comment!